Why tokenomics decides which AI agents survive production

Intelligence is the easy part now. Intelligence at sustainable unit cost is the moat.

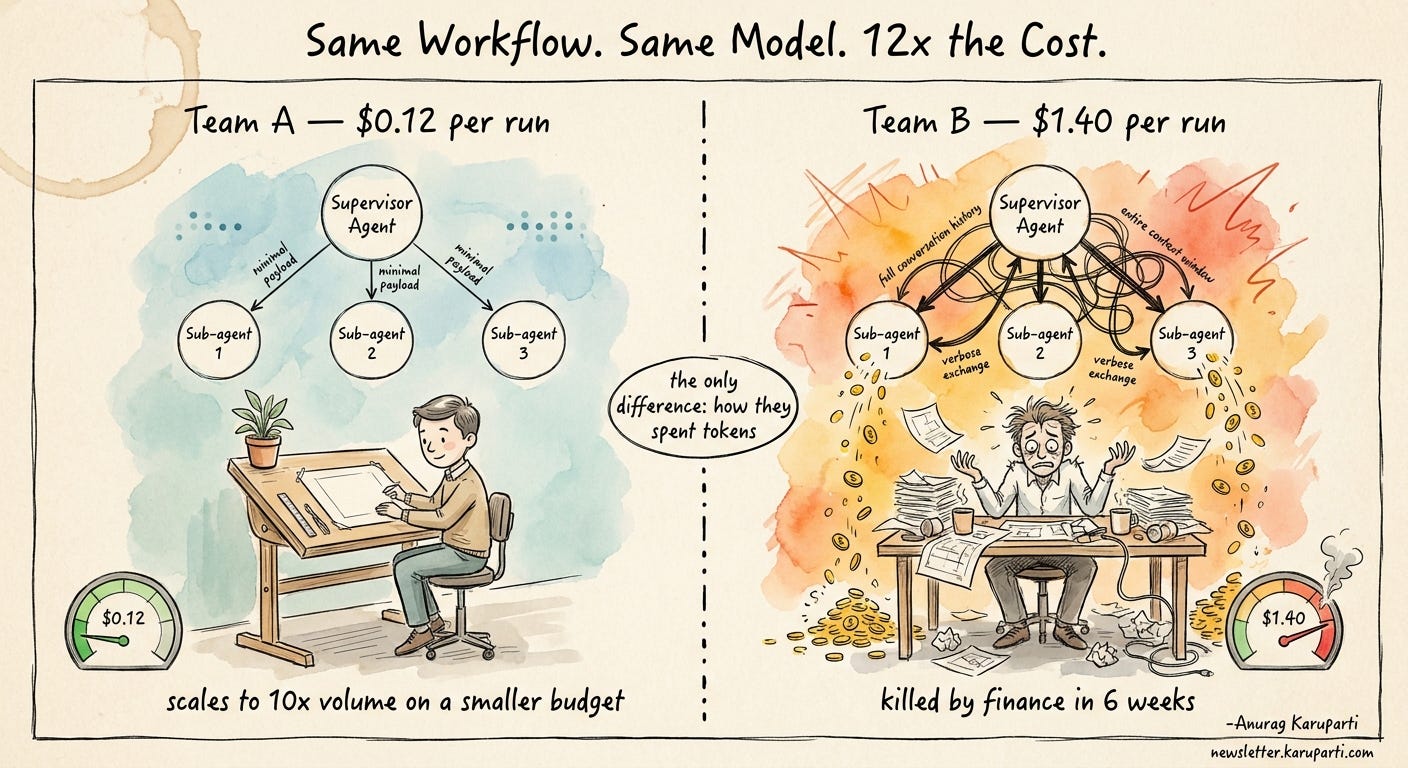

Two teams deployed the same multi-agent workflow last quarter. One costs $0.12 per run. The other costs $1.40. Same model. Same outcome. The only thing that changed was how they spent tokens.

The team running at $1.40 had a working POC, a happy demo, and a board deck full of green checkmarks. Six weeks into production, finance pulled the plug.

The team running at $0.12 is now serving ten times the volume on a smaller infrastructure budget than the original POC.

This is the part of the agentic AI conversation that almost nobody is having out loud.

We talk about model quality, evals, context engineering, orchestration patterns.

We do not talk about the unit economics of a single agent run, even though that number is the only thing that decides whether the system gets to live past the pilot.

Tokenomics is not an optimization concern you handle later. It is the architecture constraint that decides whether your project ever ships, scales, or survives the first real CFO review.

This week’s edition is about why.

First, what is tokenomics in AI?

Tokenomics in AI is the cost structure of running large language models, where every interaction is priced by the unit of work the model actually does: tokens.

A token is roughly three quarters of a word, and every prompt you send, every document you stuff into context, every tool output the model reads, and every word it generates back is metered and billed.

In a traditional software system, your unit cost is roughly fixed. A request hits an API, runs some logic, returns a response. Compute is cheap and predictable.

In an AI system driven by LLMs, your unit cost is variable, and it scales with how much the model has to read and write to do its job.

That single shift is what makes AI economics behave more like a utility bill than a software license.

The numbers are no longer abstract. Google now processes around 1.3 quadrillion tokens a month, a 130-fold jump in just over a year.

Deloitte’s 2026 CFO guidance flags AI as the single fastest-growing line item in enterprise technology budgets, eating a quarter to a half of IT spend at the firms leaning in hardest.

Unit token prices are falling, but total enterprise spend is climbing because volume is climbing faster than price is dropping.

Tokenomics is the discipline of designing systems so that this curve works in your favor instead of against you. It covers four cost surfaces:

Prompt tokens. Everything you send into the model: instructions, system prompts, user input, retrieved documents, tool outputs. A two-thousand-token system prompt prepended to every call is a tax you pay on every interaction for the life of the system.

Context tokens. The conversation history, scratchpad, and accumulated state the model carries between turns. In agent systems this grows fast.

Reasoning tokens. A newer line item. Models with extended chain-of-thought consume tokens for the thinking they do internally, often invisible to the user but very visible on your invoice.

Output tokens. What the model writes back. Usually the smallest bucket. Almost always the easiest to control.
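To make the four buckets concrete, here is a minimal sketch of how they might roll up into a cost per call. The prices are hypothetical placeholders, not any provider's actual rates, and `TokenUsage` and `run_cost` are illustrative names. The sketch also assumes reasoning tokens are billed at the output rate, which you should check against your own provider's pricing.

```python
from dataclasses import dataclass

# Hypothetical prices in USD per million tokens; substitute your provider's rates.
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00


@dataclass
class TokenUsage:
    prompt: int     # instructions, system prompt, user input, retrieved docs, tool outputs
    context: int    # conversation history and accumulated state
    reasoning: int  # internal chain-of-thought tokens
    output: int     # the visible response


def run_cost(usage: TokenUsage) -> float:
    """Estimate the USD cost of one model call from its four token buckets."""
    input_tokens = usage.prompt + usage.context
    # Assumption: reasoning tokens are billed at the output rate.
    output_tokens = usage.reasoning + usage.output
    return (
        input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    ) / 1_000_000


usage = TokenUsage(prompt=2_000, context=6_000, reasoning=1_500, output=500)
print(f"${run_cost(usage):.4f} per call")
```

Even with made-up prices, the shape is useful: the prompt and context buckets set the input side of the bill, and the reasoning bucket can outweigh the visible output.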

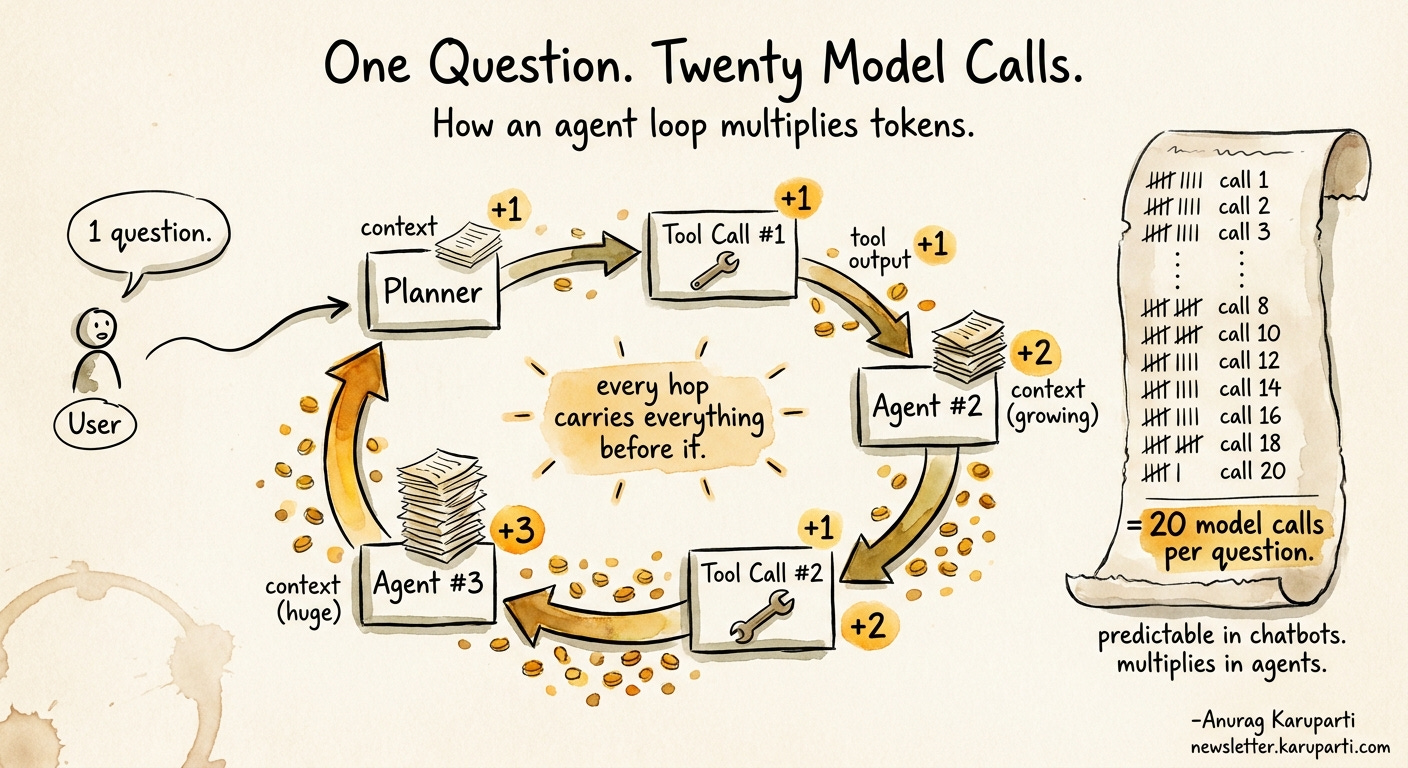

In a chatbot, these four buckets are predictable. In an agentic system, they multiply, and that is where most enterprise AI projects quietly bleed out.

The token multiplier problem in agentic AI

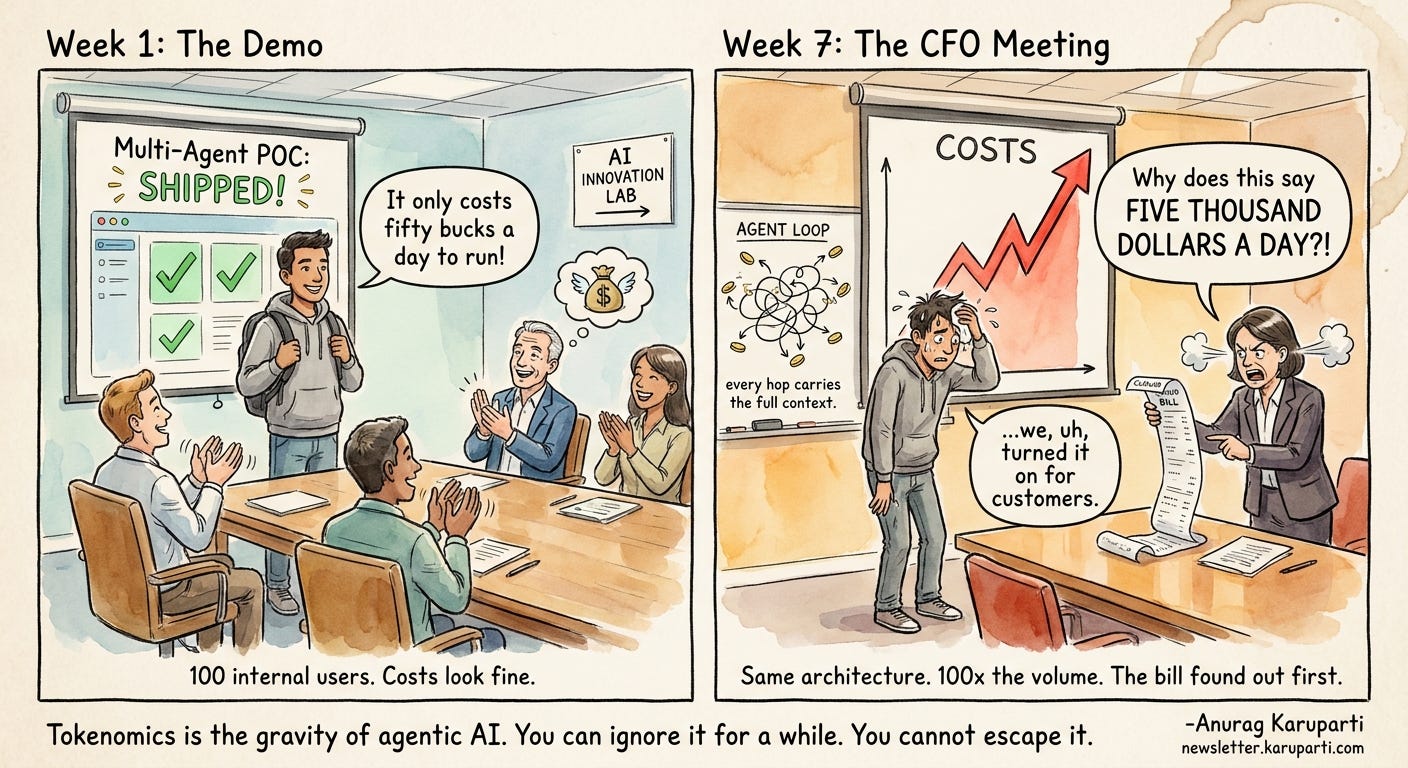

Most teams learn tokenomics the hard way. They build a chatbot, see a clean cost-per-call, and assume agentic systems will scale the same way. They will not.

A single LLM call touches the same four buckets: the input prompt, the context you stuff in, any reasoning tokens, and the output you get back. Predictable, easy to model, easy to budget.

An agent run is a different animal. Every hop multiplies tokens. The planner reads context, decides on a tool, calls the tool, reads the tool output, passes it to the next agent, which reads its own context, decides on its own tool, and on it goes. By the time a five-step agent loop finishes, you have not made one model call. You have made eight, twelve, sometimes twenty, each one carrying the accumulated context of every step before it.
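The compounding is easier to see in a toy model. The sketch below assumes every call re-reads the full accumulated context and that each call appends a fixed number of tokens (tool output plus model reply) for the next one. The function name and all of the figures are illustrative assumptions, not measurements.

```python
def agent_run_input_tokens(
    base_context: int, tokens_added_per_call: int, calls: int
) -> int:
    """Total input tokens billed across a run where each call re-reads
    everything accumulated by the calls before it."""
    total = 0
    context = base_context
    for _ in range(calls):
        total += context                  # this call reads the whole accumulated context
        context += tokens_added_per_call  # its tool output and reply are appended for the next call
    return total


single_call = agent_run_input_tokens(2_000, 1_000, calls=1)
twelve_calls = agent_run_input_tokens(2_000, 1_000, calls=12)
print(single_call, twelve_calls, twelve_calls / single_call)
```

Under these assumptions, twelve calls bill 90,000 input tokens against 2,000 for a single call. The growth is quadratic in the number of calls, not linear, because every new call pays again for everything that came before it.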

Run the math on a real workload. Ten thousand users, five thousand tokens per agent run on a naive design, twenty thousand on a heavy one. At enterprise volume, the difference between a thoughtful architecture and a lazy one is not a percentage. It is an order of magnitude.
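A rough version of that math, as a sketch. The user count and the two tokens-per-run figures come from the paragraph above. The runs per user, the days per month, and the blended price per million tokens are assumptions chosen only to make the arithmetic visible.

```python
USERS = 10_000                # from the text
RUNS_PER_USER_PER_DAY = 5     # assumption
DAYS_PER_MONTH = 30           # assumption
BLENDED_PRICE_PER_M = 5.00    # assumption: USD per million tokens, input and output blended


def monthly_cost(tokens_per_run: int) -> float:
    """Estimated monthly spend in USD for a given token footprint per run."""
    runs = USERS * RUNS_PER_USER_PER_DAY * DAYS_PER_MONTH
    return runs * tokens_per_run * BLENDED_PRICE_PER_M / 1_000_000


for tokens_per_run in (5_000, 20_000):
    print(f"{tokens_per_run:>6} tokens/run -> ${monthly_cost(tokens_per_run):,.0f} per month")
```

With these placeholder inputs the two designs land at $37,500 and $150,000 a month. Token footprint alone accounts for a 4x gap here. The per-hop multiplication described earlier is what stretches a gap like that toward an order of magnitude.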

The painful part is that almost every team discovers this after the POC ships. The pilot ran on a hundred internal users. The cost looked fine. Then marketing turned it on for the customer base, and the bill went from a rounding error to a line item the CFO wants explained in a meeting you did not want to be in.

Tokenomics is the gravity of agentic AI. You can ignore it for a while. You cannot escape it.

The three architecture decisions tokenomics forces

Once you accept that token cost compounds with every agent hop, the architecture decisions stop being style choices. They become survival choices. There are three that matter most.

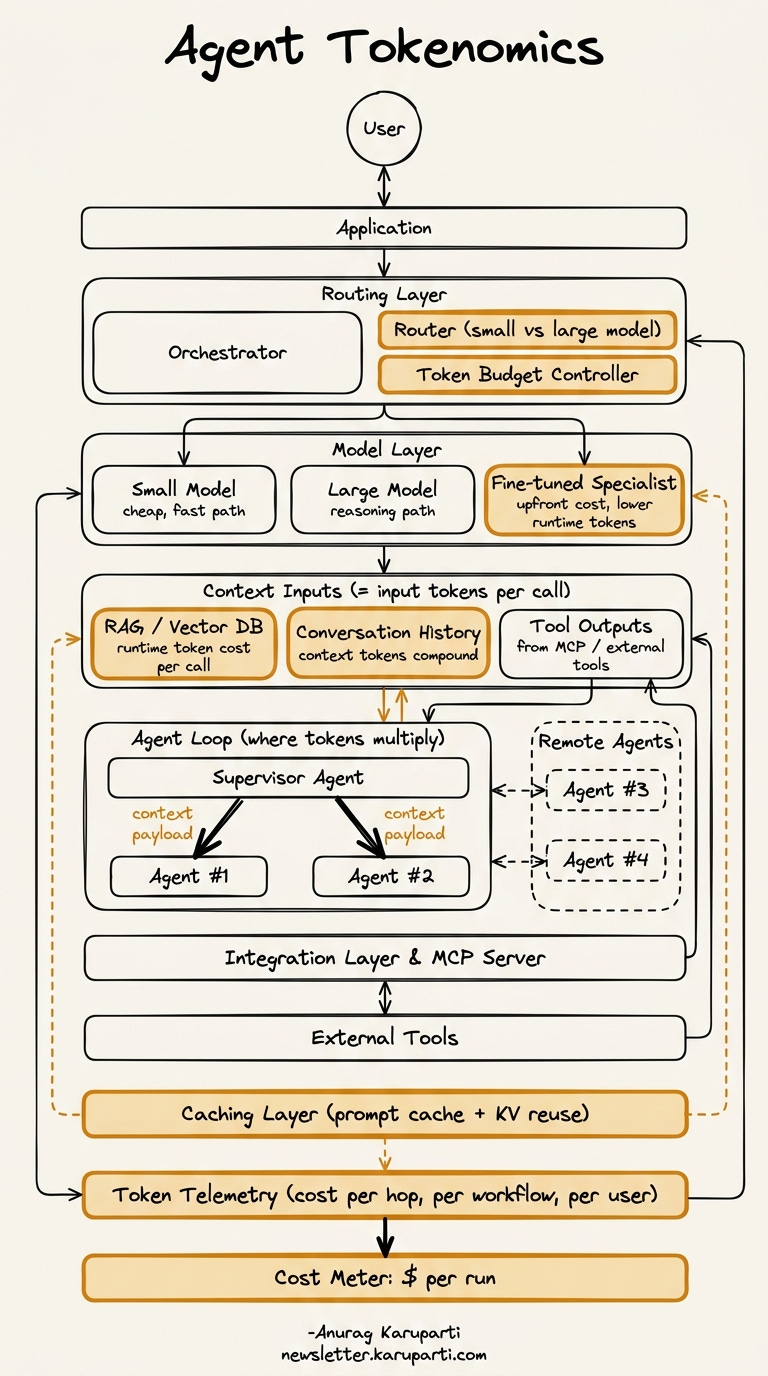

The diagram above is the map. Every amber box is a place where token cost either gets compounded or gets controlled.

Cost gets decided at the top, in the routing layer (small model vs. large model) and the token budget controller.

Cost gets compounded in the middle, inside context inputs (RAG, conversation history, tool outputs) and the agent loop, where every supervisor-to-sub-agent handoff carries a context payload that the next agent has to re-read and pay for.

Cost gets controlled at the bottom, by the caching layer, the token telemetry, and the cost meter that turns all of this into a dollar figure per run.

The survival question is simple: how much of the amber in your architecture is working for you (caching, routing, telemetry, budgets) and how much is working against you (uncapped context growth, full-history handoffs, no per-hop budgets)?
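As one illustration of amber working for you, here is a minimal sketch of a per-run token budget with a per-hop cap and a running cost meter. `TokenBudget`, `BudgetExceeded`, the limits, and the price are all hypothetical. A real controller would sit in front of your model client and read the usage figures the provider reports.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a hop or the whole run would exceed its token allowance."""


class TokenBudget:
    def __init__(self, run_limit: int, hop_limit: int, price_per_m: float):
        self.run_limit = run_limit      # max tokens for the whole agent run
        self.hop_limit = hop_limit      # max tokens any single hop may consume
        self.price_per_m = price_per_m  # hypothetical blended USD price per million tokens
        self.used = 0
        self.hops: list[tuple[str, int]] = []  # telemetry: (hop name, tokens)

    def charge(self, hop_name: str, tokens: int) -> None:
        """Record a hop's token usage, refusing it if a limit would be broken."""
        if tokens > self.hop_limit:
            raise BudgetExceeded(f"{hop_name}: {tokens} tokens exceeds the per-hop cap")
        if self.used + tokens > self.run_limit:
            raise BudgetExceeded(f"{hop_name}: run budget of {self.run_limit} exhausted")
        self.used += tokens
        self.hops.append((hop_name, tokens))

    def cost_usd(self) -> float:
        """Dollar figure for the run so far."""
        return self.used * self.price_per_m / 1_000_000


budget = TokenBudget(run_limit=20_000, hop_limit=8_000, price_per_m=5.00)
budget.charge("planner", 3_000)
budget.charge("retriever", 6_000)
budget.charge("writer", 4_000)
print(budget.used, f"${budget.cost_usd():.3f}", budget.hops)
```

The design choice worth noting is that the check happens before the tokens are counted as spent, so an oversized handoff fails loudly at the hop that caused it instead of surfacing later as an unexplained bill.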

The next three sections walk through the three decisions that determine which way the amber in your architecture tips.