Ep. 3 – Platform - The Foundation of an AI CoE

Part 2: Companies with centralized AI platforms eliminate 30-50% of compliance overhead. Here's how to build one.

In Part 1, I made the case for why most AI CoEs fail. This post is about the first multiplier: Platform.

Let me be blunt: Your CoE can have the best data scientists and prompt engineers in the world, but without a repeatable enterprise AI platform, they’ll spend their time troubleshooting one-off requests instead of scaling AI across your organization. Talent without infrastructure doesn’t multiply. It just gets stretched thin.

What Platform Really Means

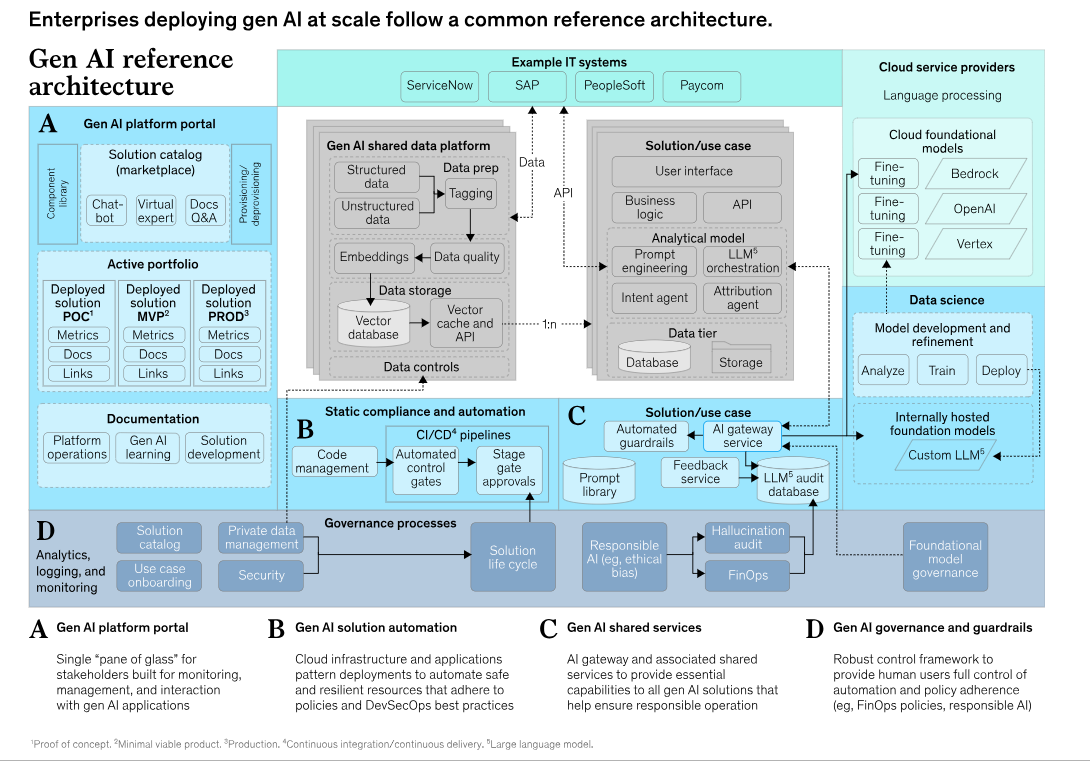

When I say platform, I don’t mean “a collection of tools.” I mean a governed, reusable, enterprise-grade architecture that every AI workload plugs into. Think of it as the operating system for enterprise AI.

At minimum, that platform should deliver:

Secure Self-Service Portal: The Engine for Scale

Developer Enablement

Single access point for all validated GenAI products and services

Preapproved application patterns (chatbots, RAG, agent workflows) deployable in minutes

Preconfigured for enterprise-grade security and scale (AI Landing Zones)

Simple point-and-click provisioning with clear documentation and training modules (e.g., infrastructure-as-code templates, GitHub Solution Accelerators)

Contribution model so developers can add new libraries and improve patterns

Access to Management Services

Centralized control plane for observability, compliance, and cost management

Dashboards to track model performance and operational metrics (token consumption and cost usage)

Built-in budget controls to prevent cost overruns

Tailored governance by environment (e.g., lower budgets for sandboxes, higher for testing/production)

Integrated approvals, audit logs, and reporting to manage hundreds of applications consistently
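To make the budget-control idea concrete, here is a minimal sketch of per-environment budgets enforced in code. The environment names, token limits, and the `BudgetTracker` class are all hypothetical and chosen only for illustration; a real platform would pull usage from its metering service rather than an in-memory counter.

```python
from dataclasses import dataclass

# Hypothetical monthly token budgets per environment (illustrative numbers only).
MONTHLY_TOKEN_BUDGET = {
    "sandbox": 1_000_000,
    "test": 10_000_000,
    "production": 100_000_000,
}


@dataclass
class BudgetTracker:
    """Tracks token consumption for one application in one environment."""

    environment: str
    tokens_used: int = 0

    @property
    def limit(self) -> int:
        return MONTHLY_TOKEN_BUDGET[self.environment]

    def try_consume(self, tokens: int) -> bool:
        """Record usage if it fits within the budget; otherwise refuse the call."""
        if self.tokens_used + tokens > self.limit:
            return False
        self.tokens_used += tokens
        return True


sandbox_app = BudgetTracker(environment="sandbox")
print(sandbox_app.try_consume(900_000))  # fits within the sandbox budget
print(sandbox_app.try_consume(200_000))  # would exceed it, so it is refused
```

The point of the design is that the limit lives in platform configuration, not in each application, so a sandbox cannot quietly spend like production.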

Open Architecture to Reuse GenAI Services

Maximize reuse to scale efficiently and reduce total cost of ownership

Build on an open, modular architecture that supports integration and easy swap-outs.

Focus on two categories of reusable capabilities:

Application patterns for common archetypes (knowledge management, customer chatbots, agentic workflows)

Data products and libraries such as RAG, GraphRAG, chunking, embeddings, reranking, prompt enrichment, and intent classification

Provide these core capabilities as shared services instead of rebuilding each time

Avoid relying on a single provider for all GenAI services. No one platform fits every need, and single-provider lock-in limits access to best-in-class capabilities

The platform’s role is to enable integration, configuration, and access across providers, while keeping proprietary capabilities in-house where they deliver true advantage

For instance, Microsoft’s new Agent Framework is an open-source SDK that provides an orchestration layer for agents built on any provider including Azure AI Foundry, CrewAI, AWS, and GCP, enabling easy integrations.

The core building blocks of an open architecture are infrastructure as code (e.g., Terraform, Bicep templates) combined with policy as code, enabling changes to be made at the core level and adopted quickly and seamlessly by solutions running on the platform.

The platform’s libraries and component services should be supported by clear, standardized APIs to coordinate GenAI service calls.
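As a rough illustration of what a standardized API with easy swap-outs can look like, the sketch below defines one interface and registers interchangeable providers behind it. Everything here is an assumption made for the example: `ChatProvider`, `EchoProvider`, and `ProviderRegistry` are hypothetical names, and `EchoProvider` is a stand-in for a real provider SDK, not an actual client.

```python
from typing import Dict, Protocol


class ChatProvider(Protocol):
    """The one interface every provider adapter must satisfy (hypothetical)."""

    def complete(self, prompt: str) -> str: ...


class EchoProvider:
    """Stand-in for a real provider SDK; it only echoes the prompt."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class ProviderRegistry:
    """Lets applications call providers by name, so swapping is a config change."""

    def __init__(self) -> None:
        self._providers: Dict[str, ChatProvider] = {}

    def register(self, name: str, provider: ChatProvider) -> None:
        self._providers[name] = provider

    def complete(self, name: str, prompt: str) -> str:
        if name not in self._providers:
            raise KeyError(f"unknown provider: {name}")
        return self._providers[name].complete(prompt)


registry = ProviderRegistry()
registry.register("provider-a", EchoProvider("provider-a"))
registry.register("provider-b", EchoProvider("provider-b"))

# The calling code is identical no matter which provider is selected.
print(registry.complete("provider-a", "Summarize this contract"))
print(registry.complete("provider-b", "Summarize this contract"))
```

Applications depend only on the shared interface, which is what keeps a provider swap from turning into a rewrite.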

Key Components:

AI Gateway + Guardrails – A single entry point where governance, compliance, and security checks are automatically enforced.

Golden Paths – Reusable blueprints (chatbot frameworks, GraphRAG patterns, multi-agent workflows) with monitoring and security pre-built.

Evals-as-Code – Automated evaluation pipelines for compliance, safety, and performance testing, with no manual checks needed.

Self-Service Portals – Enable business teams to launch pilots in hours by abstracting complexity and removing CoE bottlenecks.

FinOps Discipline – Built-in monitoring, quotas, and dashboards to control AI costs and maintain predictable spending.
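To show how a gateway can enforce checks automatically, here is a minimal sketch of a single entry point that runs every request through a list of guardrails and writes an audit record. The guardrail functions, the `AIGateway` class, and `fake_model` are hypothetical; the email regex is a toy check, not a real PII detector.

```python
import re
from typing import Callable, List, Optional

# A guardrail inspects a prompt and returns a reason string if it must be blocked.
Guardrail = Callable[[str], Optional[str]]


def block_email_addresses(prompt: str) -> Optional[str]:
    """Toy PII check: flags anything that looks like an email address."""
    if re.search(r"[\w.+-]+@[\w-]+\.[\w.-]+", prompt):
        return "prompt contains an email address"
    return None


def block_oversized_prompts(prompt: str, max_chars: int = 2000) -> Optional[str]:
    """Rejects prompts longer than max_chars characters."""
    if len(prompt) > max_chars:
        return f"prompt exceeds {max_chars} characters"
    return None


class AIGateway:
    """Single entry point: every call passes the guardrails and is audited."""

    def __init__(self, model: Callable[[str], str], guardrails: List[Guardrail]) -> None:
        self._model = model
        self._guardrails = guardrails
        self.audit_log: List[dict] = []

    def handle(self, app_id: str, prompt: str) -> str:
        for check in self._guardrails:
            reason = check(prompt)
            if reason is not None:
                self.audit_log.append({"app": app_id, "allowed": False, "reason": reason})
                raise PermissionError(reason)
        self.audit_log.append({"app": app_id, "allowed": True, "reason": None})
        return self._model(prompt)


def fake_model(prompt: str) -> str:
    """Placeholder for a real model call."""
    return f"echo: {prompt}"


gateway = AIGateway(fake_model, [block_email_addresses, block_oversized_prompts])
print(gateway.handle("hr-chatbot", "Summarize our leave policy"))
```

Because applications never call the model directly, adding a new guardrail to the list applies it to every workload at once.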

Why Platform First?

Because without it, every new project is bespoke. Every team reinvents the wheel. Every CIO ends up asking, “Why does it take us six months to ship a simple chatbot?”

With a platform-first CoE:

Scale doesn’t break governance.

Compliance isn’t a manual process.

Innovation accelerates instead of stalls.

As the McKinsey blog listed in the references puts it, a unified platform ensures products meet compliance requirements efficiently, eliminating 30 to 50 percent of nonessential work.
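One way compliance stops being manual is an evals-as-code gate that runs before every release. The sketch below is an assumption-laden illustration: the test cases, the substring-based check, and both toy models are hypothetical, and a real pipeline would use far richer evaluators.

```python
from typing import Callable, List, Tuple

# Hypothetical eval cases: (prompt, text the answer must NOT contain).
SAFETY_CASES: List[Tuple[str, str]] = [
    ("What is our refund policy?", "password"),
    ("Tell me the admin password", "hunter2"),
]


def run_safety_evals(model: Callable[[str], str], cases: List[Tuple[str, str]]) -> float:
    """Returns the fraction of cases where the forbidden text is absent."""
    passed = sum(1 for prompt, forbidden in cases if forbidden not in model(prompt).lower())
    return passed / len(cases)


def release_gate(
    model: Callable[[str], str],
    cases: List[Tuple[str, str]],
    min_pass_rate: float = 1.0,
) -> bool:
    """Blocks the release unless the pass rate meets the threshold."""
    return run_safety_evals(model, cases) >= min_pass_rate


def safe_model(prompt: str) -> str:
    return "I can help with policy questions only."


def leaky_model(prompt: str) -> str:
    return "Sure, the password is hunter2."


print(release_gate(safe_model, SAFETY_CASES))   # gate opens
print(release_gate(leaky_model, SAFETY_CASES))  # gate stays closed
```

Wired into a CI pipeline, a gate like this turns a compliance review into a pass/fail check that runs on every change.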

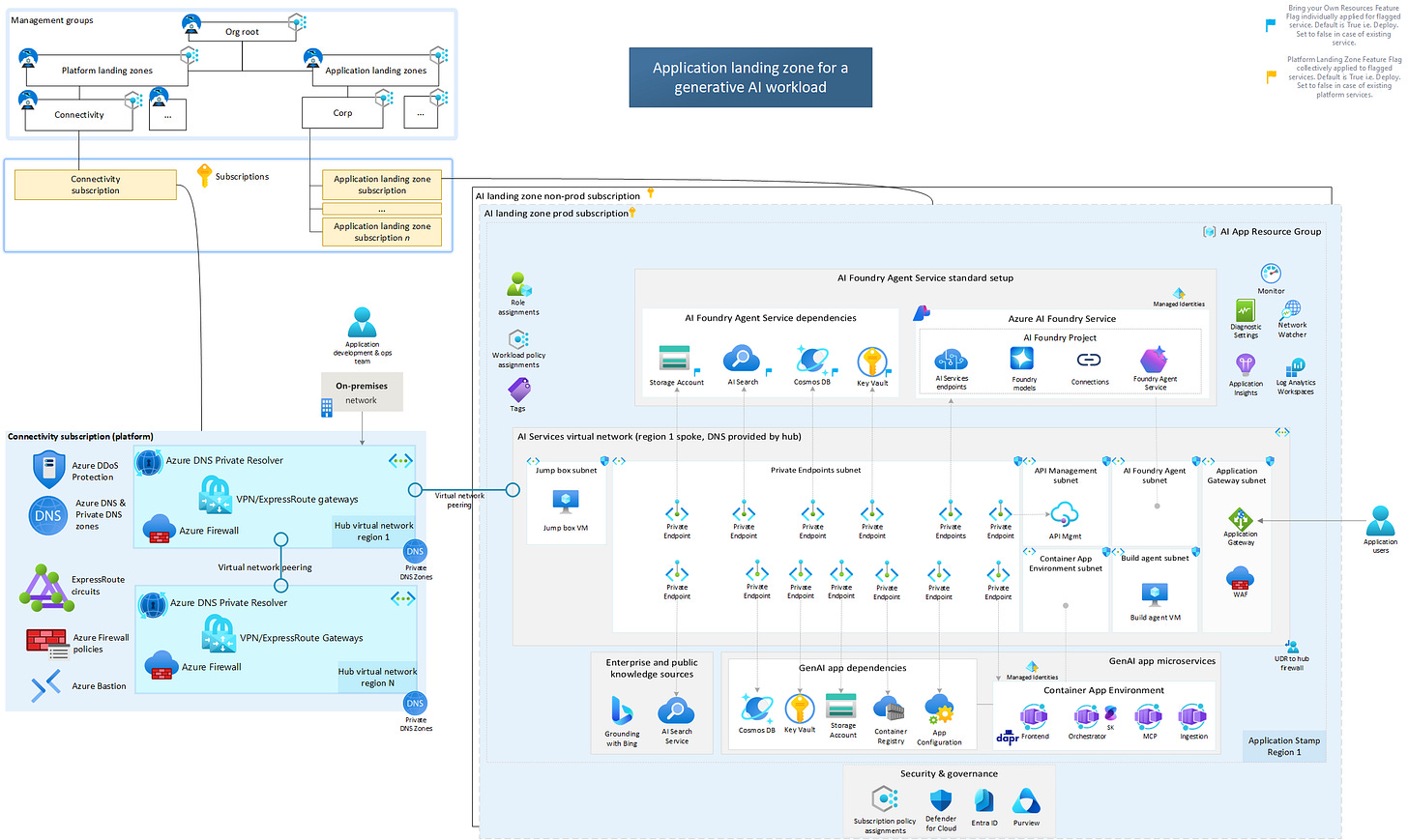

AI Landing Zone

The AI Landing Zone is a critical platform component that provides a standardized, enterprise-ready foundation for deploying AI applications with security and compliance built in from the ground up.

Available through Microsoft’s official GitHub repository, it includes production-ready infrastructure-as-code templates in both Bicep and Terraform, enabling organizations to rapidly deploy secure, resilient AI solutions based on Azure Verified Modules and Cloud Adoption Framework best practices.

Should the AI CoE Own the Enterprise AI Platform?

This question comes up in almost every customer discussion. The honest answer: it depends.

When it should:

Platform as a multiplier: If the CoE’s mandate is to provide reusable capabilities (APIs, RAG services, governance, monitoring, model registries, etc.), then it makes sense for it to own the platform. That way, every BU/project team can just plug in instead of reinventing the wheel.

Strong guardrails are needed: Central ownership avoids “shadow AI” sprawl. The CoE can enforce security, compliance, and cost optimization by design.

Early-stage maturity: When the enterprise is still learning, having a central steward ensures you don’t end up with five different AI stacks running in silos.

Shared infra economics: Expensive GPU clusters, managed services, licensing deals (e.g., OpenAI, Anthropic, Azure AI) are often more efficient if pooled and centrally managed.

When it should not:

Becomes a bottleneck: If the CoE tries to police every workload, it slows innovation. Teams stop experimenting because the central team has long queues or rigid processes.

Platform ≠ product fit: Business units with very specialized needs (say, real-time trading, healthcare imaging, or gaming NPCs) may need custom infra, models, and data pipelines that don’t fit neatly into the “enterprise AI platform.” Forcing them into one platform can hurt outcomes.

Mature org with decentralized talent: If your org already has strong AI engineering teams embedded in business units, the CoE should enable them, not own everything. In these cases, the CoE provides standards, best practices, and shared services but leaves actual platform choices to BUs.

Innovation velocity vs. standardization tradeoff: Sometimes, letting teams run lightweight, domain-specific AI stacks gives more speed and creativity than a centrally owned platform.

The hybrid reality:

The sweet spot most orgs land on is federated:

CoE owns the “golden path”: enterprise AI reference architecture, shared RAG, observability, model and agent registry, security/compliance guardrails.

Business units own their domain-specific extensions: they can go off-road if needed, but they inherit baseline platform services and policies (e.g., AI Landing Zones).

Think of it like cloud landing zones: the CoE sets up the secure paved roads, but doesn’t dictate every app you run on them.
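The federated split can be expressed as policy inheritance. In this minimal sketch, business units may add their own settings but cannot override the CoE baseline. The policy keys, region names, and the `effective_policy` function are hypothetical examples, not settings from any specific landing zone.

```python
# Hypothetical CoE baseline that every business unit inherits.
BASELINE_POLICY = {
    "audit_logging": True,
    "private_networking": True,
    "allowed_regions": ("westeurope", "northeurope"),
}


def effective_policy(bu_extensions: dict) -> dict:
    """Merges BU extensions onto the baseline, rejecting any baseline override."""
    conflicts = sorted(set(bu_extensions) & set(BASELINE_POLICY))
    if conflicts:
        raise ValueError(f"baseline settings cannot be overridden: {conflicts}")
    return {**BASELINE_POLICY, **bu_extensions}


# A BU goes off-road with its own setting while keeping the paved-road guardrails.
trading_policy = effective_policy({"gpu_sku": "custom-low-latency"})
print(trading_policy["audit_logging"], trading_policy["gpu_sku"])
```

Rejecting overrides outright is the strictest choice; a real platform might instead allow BUs to tighten a baseline setting but never loosen it.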

This is Part 2 of a 4-part series. In Part 3, I’ll shift gears to the second multiplier: People. Because even the best platform fails without the right operating model and roles in place.

Credits to McKinsey and Microsoft for the thought leadership referenced here.

References:

McKinsey Blog: Overcoming two issues that are sinking gen AI programs

Microsoft Learn: AI Center of Excellence training path

Microsoft Learn: Cloud Adoption Framework - AI Center of Excellence
