Mythos and Cyber Models: What does it mean for the future of software?

For the first time ever, a model was intentionally made worse before release. When two frontier AI companies treat their own models like classified technology, the rest of us need to pay attention.

Every week there is a new model, a new benchmark, a new funding round.

But this week is different. For the first time in model launch history, a company intentionally made its model worse on a benchmark before releasing it to the public.

That company is Anthropic. And if you build, secure, or operate software at scale, you need to understand why they did it.

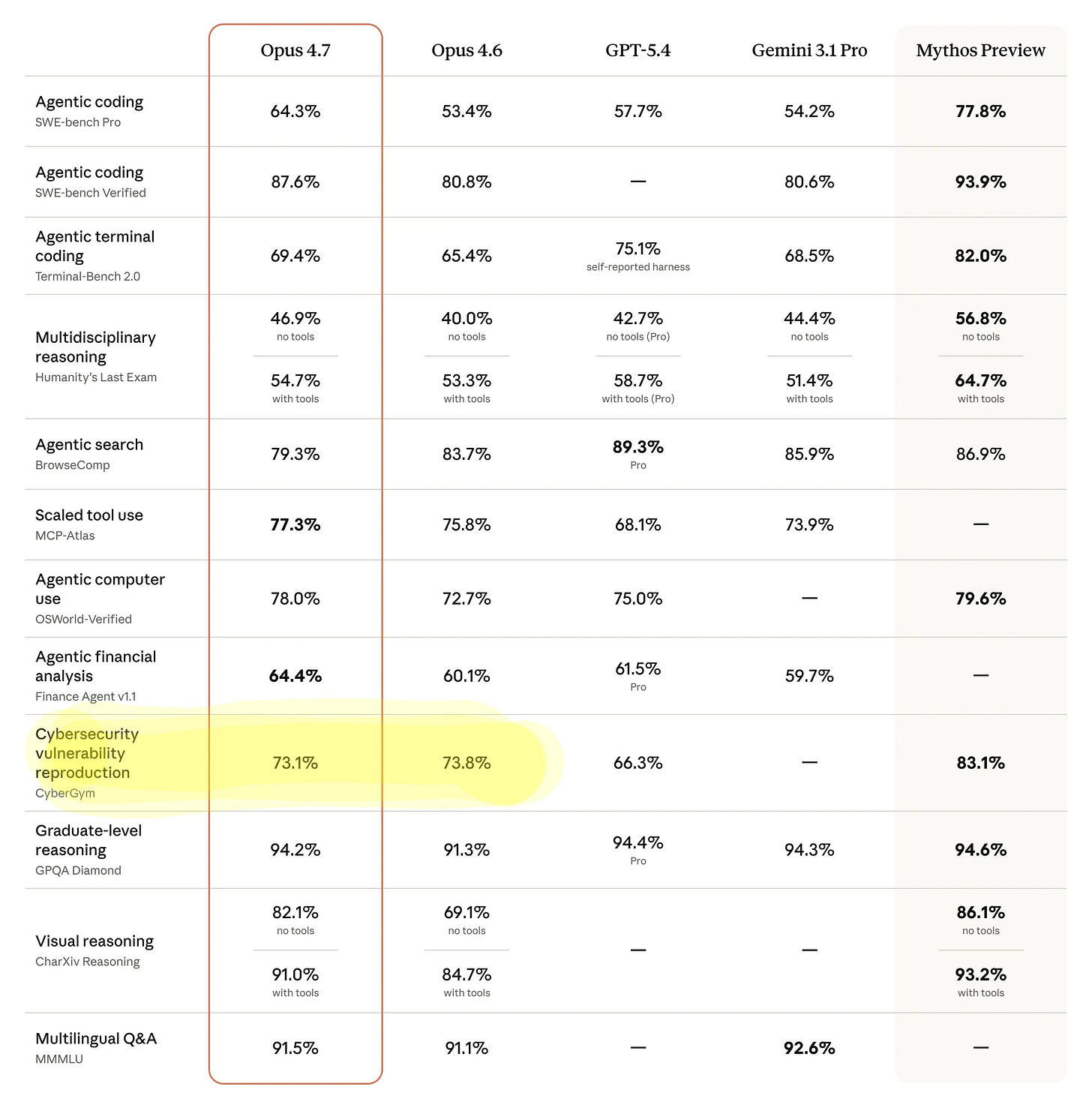

Opus 4.7 was released yesterday. As the chart above shows, it scores lower on the CyberBench benchmark than Opus 4.6.

Last week, Mythos was released to a small set of organizations that operate critical software so they could harden their systems.

If you haven’t heard about Mythos yet, it is an extremely powerful model capable of identifying vulnerabilities in highly secure software systems.

It found a 27-year-old bug in OpenBSD, one of the most security-hardened operating systems in the world. It found a 16-year-old flaw in FFmpeg.

It chained multiple Linux kernel vulnerabilities to go from ordinary user access to full machine control.

That is what scares me. Your bank runs on this software. So do hospitals, power grids, and financial markets. If these models reach the wrong hands, the consequences could be catastrophic.

If you want a solid explainer on why Mythos matters beyond the technical benchmarks, I highly recommend watching Hank Green’s video “You Actually Do Need to Understand Mythos” on YouTube. He brings on cybersecurity expert Sherri Davidoff, and together they do an excellent job of making this accessible and putting the broader implications into perspective.

A lot of the thinking in this post was sharpened by that conversation.

The Shift: From Potential to Practical Impact

Claude Mythos is demonstrating capabilities that go beyond incremental improvements in reasoning or coding performance.

This model is not just answering questions or generating code snippets. It is actively analyzing real-world systems, identifying previously unknown vulnerabilities, and in some cases combining them into working exploit chains.

It scans production-grade software systems. It identifies zero-day vulnerabilities that no one has documented. It chains multiple weaknesses into viable attack paths. And it operates at a scale that no human team can match.

This is not hypothetical or speculative. This is already happening in controlled environments today.

OpenAI is Thinking the Same Thing

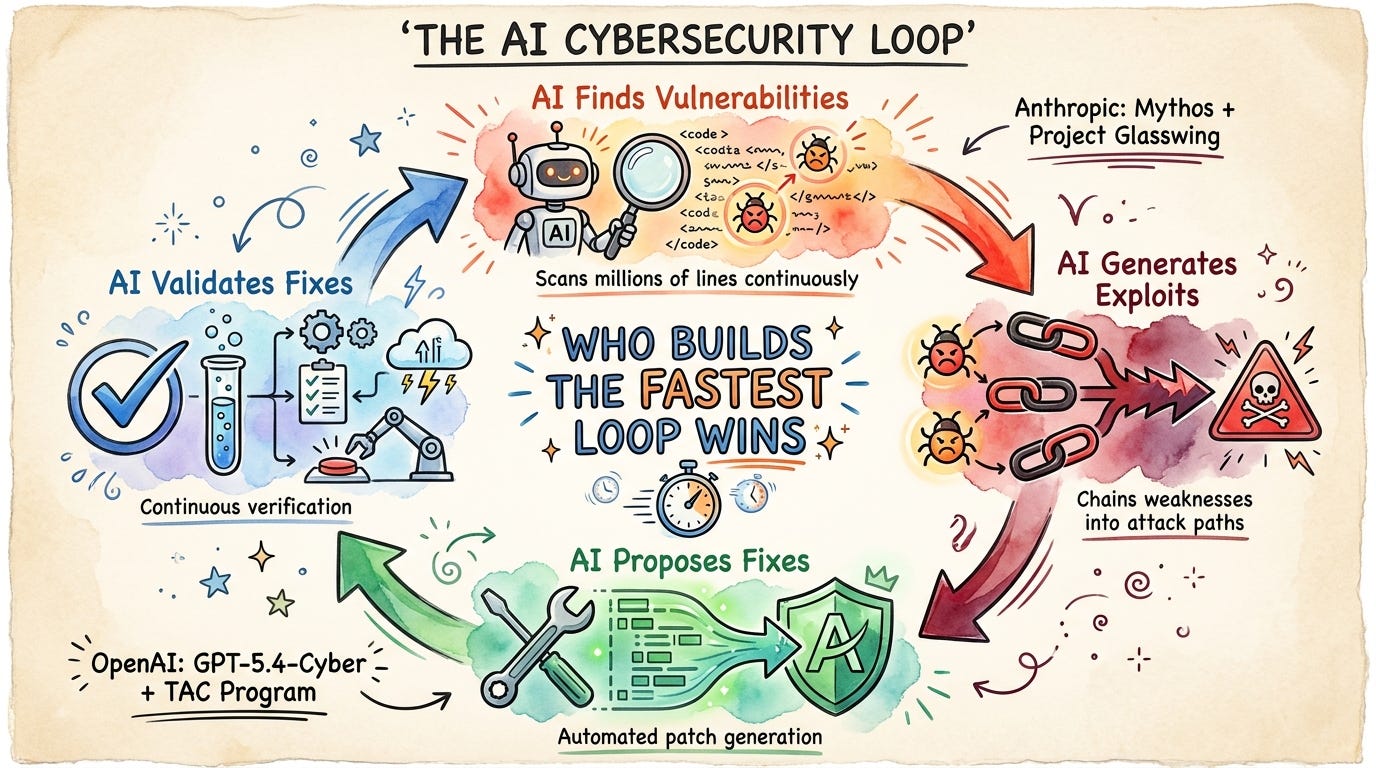

Anthropic is not the only one pumping the brakes. Just days after Mythos went out to its 40 handpicked partners under Project Glasswing, OpenAI released GPT-5.4-Cyber, a variant of its flagship model fine-tuned specifically for defensive cybersecurity use cases. It is only available to vetted participants in their Trusted Access for Cyber (TAC) program.

The pattern is clear. Both frontier labs now believe their models are powerful enough that unrestricted access is a liability. OpenAI’s Codex Security tool has already contributed to fixing over 3,000 critical and high-severity vulnerabilities.

GPT-5.4-Cyber goes further by relaxing many of the standard safety guardrails for authenticated defenders and by supporting tasks such as binary reverse engineering.

Two of the biggest AI companies in the world are now treating their own models the way defense contractors treat classified technology. That is not a marketing stunt. That is a signal.

Why This Changes Cybersecurity

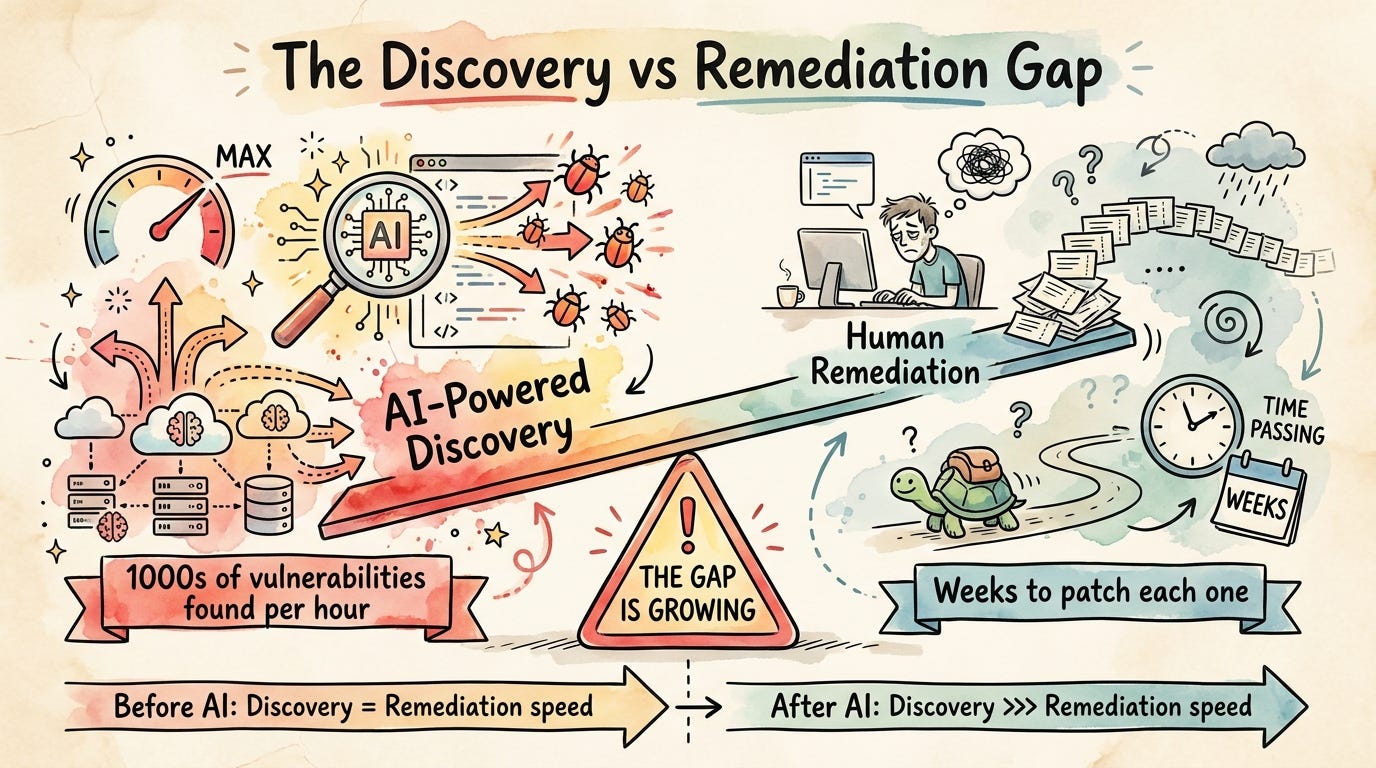

For a long time, cybersecurity had a natural limiting factor, which was human effort.

Finding vulnerabilities required time, expertise, and persistence, and even skilled attackers could only move so fast.

Now that constraint is disappearing.

Vulnerability discovery happens continuously instead of periodically. Thousands of potential weaknesses can be evaluated in parallel. Exploit development can be partially or fully automated.

There are already examples where long-standing vulnerabilities, including one that existed for nearly three decades in a hardened operating system (OpenBSD), were identified and weaponized into working exploits.

That is not a small improvement. That is a fundamental shift in capability.

The Real Problem Was Always There

It is tempting to think that AI is introducing new security risks, but the reality is more uncomfortable.

The majority of the risk already exists in the form of known vulnerabilities that have not been patched, systems that are too fragile to update quickly, and backlogs that security teams have not been able to keep up with.

What AI does is accelerate the discovery side without equally accelerating the remediation side. This creates an imbalance.

When vulnerabilities are discovered faster than they can be fixed, the attack surface grows in practice, even if the underlying systems have not changed.
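To make that imbalance concrete, here is a minimal sketch of the arithmetic. The function name `open_backlog` and all the weekly rates are my own illustrative assumptions, not measured data. It simply tracks how many found-but-unpatched vulnerabilities remain when discovery speeds up and remediation does not.

```python
def open_backlog(discovered_per_week, fixed_per_week, weeks, start=0):
    """Return the count of open (found but unpatched) vulnerabilities after each week.

    All rates are hypothetical inputs chosen for illustration.
    """
    backlog = start
    history = []
    for _ in range(weeks):
        backlog += discovered_per_week            # new findings arrive
        backlog -= min(backlog, fixed_per_week)   # the team can only fix so many
        history.append(backlog)
    return history


# Discovery and remediation move at the same (human) pace.
human_pace = open_backlog(discovered_per_week=5, fixed_per_week=5, weeks=4)

# Discovery is 10x faster, remediation capacity is unchanged.
ai_pace = open_backlog(discovered_per_week=50, fixed_per_week=5, weeks=4)

print(human_pace)  # [0, 0, 0, 0]
print(ai_pace)     # [45, 90, 135, 180]
```

The systems are identical in both runs. The only thing that changed is how fast the weaknesses are found, and the open backlog grows by the difference between the two rates every week.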

The Attack Landscape is Already Evolving

This is not a distant future scenario. We are already seeing early versions of this shift.

AI-powered hacking tools are available in underground markets. Malware can be generated with minimal expertise. Exploit discovery is becoming more accessible to less sophisticated actors.

Even models with weaker capabilities have demonstrated the ability to identify vulnerabilities and assist in generating attack paths, which means more advanced systems will only accelerate this trend.

The Systemic Risk Most People Miss

Cybersecurity is not just about individual bugs. It is about how those bugs propagate across systems.

One of the biggest hidden risks is software monoculture. The same operating systems, libraries, and frameworks are used globally. A single vulnerability can affect millions of systems simultaneously.

Attackers can reuse the same exploit across multiple targets with minimal modification.

When AI accelerates vulnerability discovery in these environments, the impact is amplified. This is how localized issues turn into widespread outages or coordinated attacks across industries.
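A toy sketch of the monoculture effect, under loud assumptions: the host names, component names, and the `blast_radius` helper are all made up for illustration. It counts how many systems a single vulnerable component touches in a uniform fleet versus a more varied one.

```python
def blast_radius(fleet, vulnerable_component):
    """Count hosts whose software stack includes the vulnerable component."""
    return sum(1 for stack in fleet.values() if vulnerable_component in stack)


# Hypothetical fleets: every name below is invented for this example.
monoculture = {f"host-{i}": {"libshared", "os-a"} for i in range(6)}

diverse = {
    "host-0": {"libshared", "os-a"},
    "host-1": {"libshared", "os-b"},
    "host-2": {"libalt", "os-a"},
    "host-3": {"libalt", "os-b"},
    "host-4": {"libcustom", "os-a"},
    "host-5": {"libcustom", "os-b"},
}

print(blast_radius(monoculture, "libshared"))  # 6
print(blast_radius(diverse, "libshared"))      # 2
```

One bug in the shared library reaches every host in the uniform fleet, but only a third of the varied one. Real environments are far messier than this, but the scaling logic is the same.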

An Unexpected Counterbalance

There is one interesting development starting to emerge.

As AI reduces the cost of building software, organizations may begin to create more customized and less standardized systems.

That shift could reduce shared attack surfaces, limit the scalability of exploits, and increase diversity in software architectures.

However, this benefit only materializes if security practices evolve at the same pace as development. Right now, development is accelerating faster than security can adapt.

Where This is Heading

We are entering a phase where cybersecurity becomes a contest between competing AI systems.

AI finds the vulnerabilities. AI generates the exploits. AI proposes the fixes. AI validates those fixes. The entire cycle is shifting from human-driven workflows to machine-accelerated loops.

This is no longer about individual tools or isolated improvements. It is about who can build the fastest, most reliable feedback loop between detection and remediation.

Anthropic made a model worse on purpose because they understood something most of the industry has not caught up to yet: the capability is already here, and the only question left is who gets to use it first and how.

We like to believe that modern software systems are mature and well understood. They are not. We are still in an early phase where complexity has outpaced our ability to fully secure what we build.

AI is not introducing that complexity. It is exposing it. And the organizations that recognize this now will have a very different next twelve months than the ones that do not.
