How to actually calculate ROI for modern agentic systems

2026 is the year you either prove AI ROI or lose your budget. In this post, I share a framework to calculate true costs and measure ROI more accurately.

Agentic AI is rapidly transforming the way businesses operate. These intelligent agents can autonomously perform tasks, make decisions, and interact with users, minimizing the need for human intervention. As organizations increasingly adopt agentic AI apps, measuring their ROI has become critical to justify the investment and ensure their effectiveness.

My 2026 framework builds on my early 2025 approaches, with key additions enterprises now require for production-scale agent deployments. Because in 2026, ROI is no longer only about automation. It must also include reliability, governance, oversight, and the full operating cost of running agents safely at scale.

I’ve been in several meetings over the past year where the conversation follows the same script. The AI team demos an impressive agentic workflow. The POC results look solid.

Then the decision-makers ask the question that stops everything: “What’s the actual ROI on this?”

And here’s where most teams stumble. They pull out frameworks built for 2023-era AI projects, essentially chatbots with better language models. They calculate inference costs, subtract them from labor savings, divide by the cost, multiply by 100, and present a number. Sometimes it’s impressive. Sometimes it’s not. But it’s almost always wrong.

The problem isn’t the math. The problem is that agentic AI fundamentally broke the traditional ROI model the moment agents started taking actions instead of just making predictions.

Simple AI (no orchestration needed):

User asks question → Model generates answer → Done

Agentic AI (requires orchestration):

User requests ticket resolution → Router agent determines ticket type → Specialist agent is invoked → Agent calls knowledge base API → Agent calls identity management system → Agent updates ticketing system → Agent routes to human if confidence is low → Completion handler logs the interaction
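To make the difference concrete, here is a minimal Python sketch that tags each step of the two flows above by the kind of resource it consumes. The `kind` labels and the assumption that routing and the specialist agent each cost one model call are mine, for illustration only; a real architecture may split the steps differently.

```python
from collections import Counter

# (step description, kind of resource consumed). Kinds are assumed labels.
SIMPLE_FLOW = [
    ("Model generates answer", "model"),
]

AGENTIC_FLOW = [
    ("Router agent determines ticket type", "model"),
    ("Specialist agent is invoked", "model"),
    ("Agent calls knowledge base API", "tool"),
    ("Agent calls identity management system", "tool"),
    ("Agent updates ticketing system", "tool"),
    ("Agent routes to human if confidence is low", "human"),
    ("Completion handler logs the interaction", "logging"),
]


def steps_by_kind(flow):
    """Count how many steps of each kind a single request touches."""
    return Counter(kind for _, kind in flow)


print(steps_by_kind(SIMPLE_FLOW))
print(steps_by_kind(AGENTIC_FLOW))
```

Each kind that shows up in the second count is a separate line item in the cost model, while the simple flow has only one.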

Here’s what makes agentic AI ROI different: you can’t calculate it accurately without the people who actually built the system.

Finance teams can’t estimate orchestration overhead.

Product managers can’t quantify tool execution costs.

Only the technical teams who designed the agent architecture, implemented the evaluation pipelines, and operated the system in production understand the actual cost variables.

This is why so many ROI projections fall apart six months into deployment - the business case was built without the practitioners in the room.

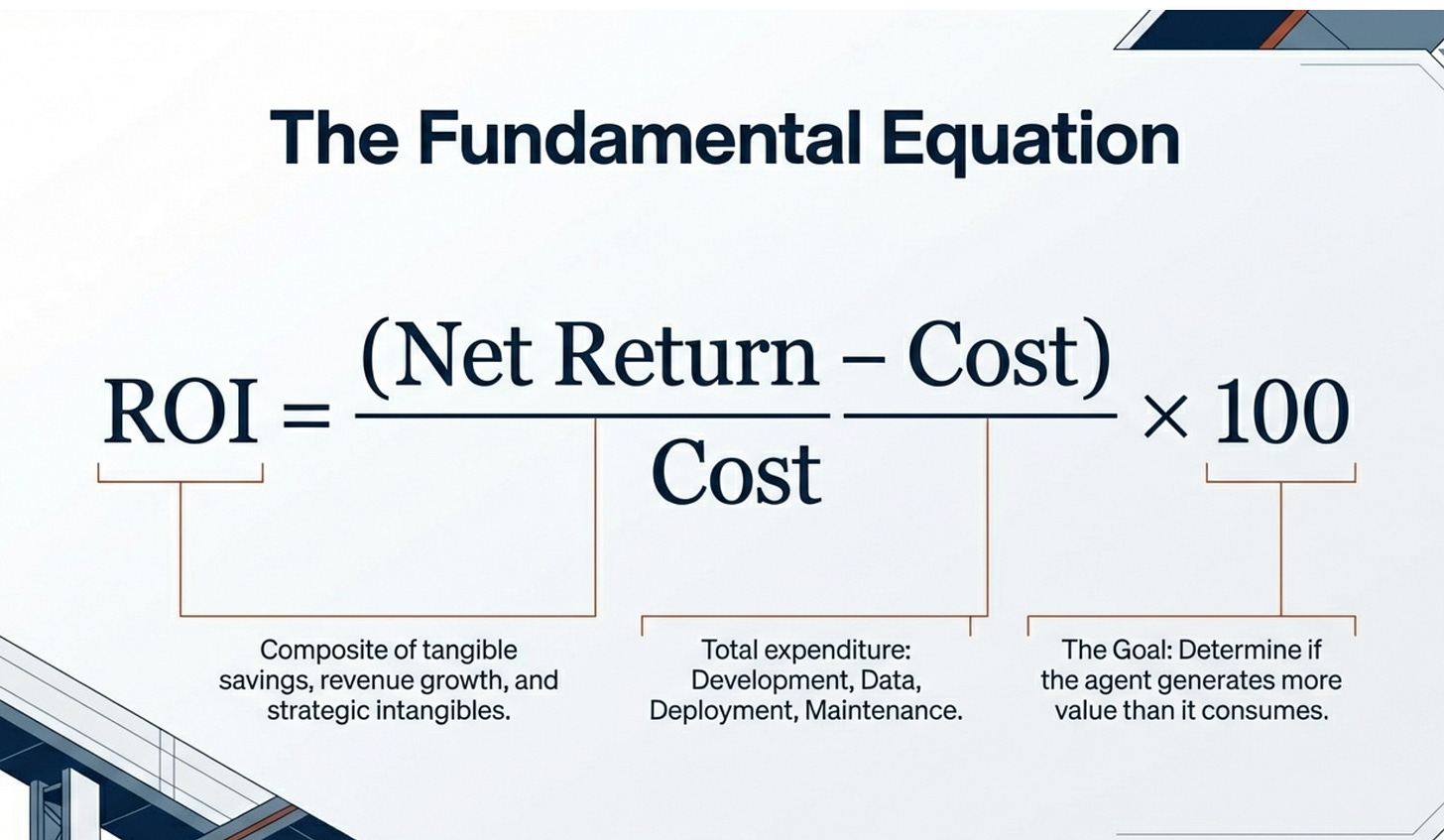

The ROI formula hasn’t changed. What you put into it has.

The fundamental formula remains:

ROI = (Total Benefit from Investment − Cost of Investment) / Cost of Investment × 100
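As a minimal sketch, the formula can be written as a small Python function. The name `roi_percent` and the sample inputs are illustrative assumptions. Note that `total_benefit` here is the gross return, before costs are subtracted.

```python
def roi_percent(total_benefit: float, total_cost: float) -> float:
    """Return ROI as a percentage: (benefit - cost) / cost * 100."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost * 100


# Illustrative inputs only: $150 of gross benefit on $100 of cost.
print(f"{roi_percent(150.0, 100.0):.1f}%")
```

The rest of this post is about what belongs in `total_benefit` and `total_cost`, not about the function itself.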

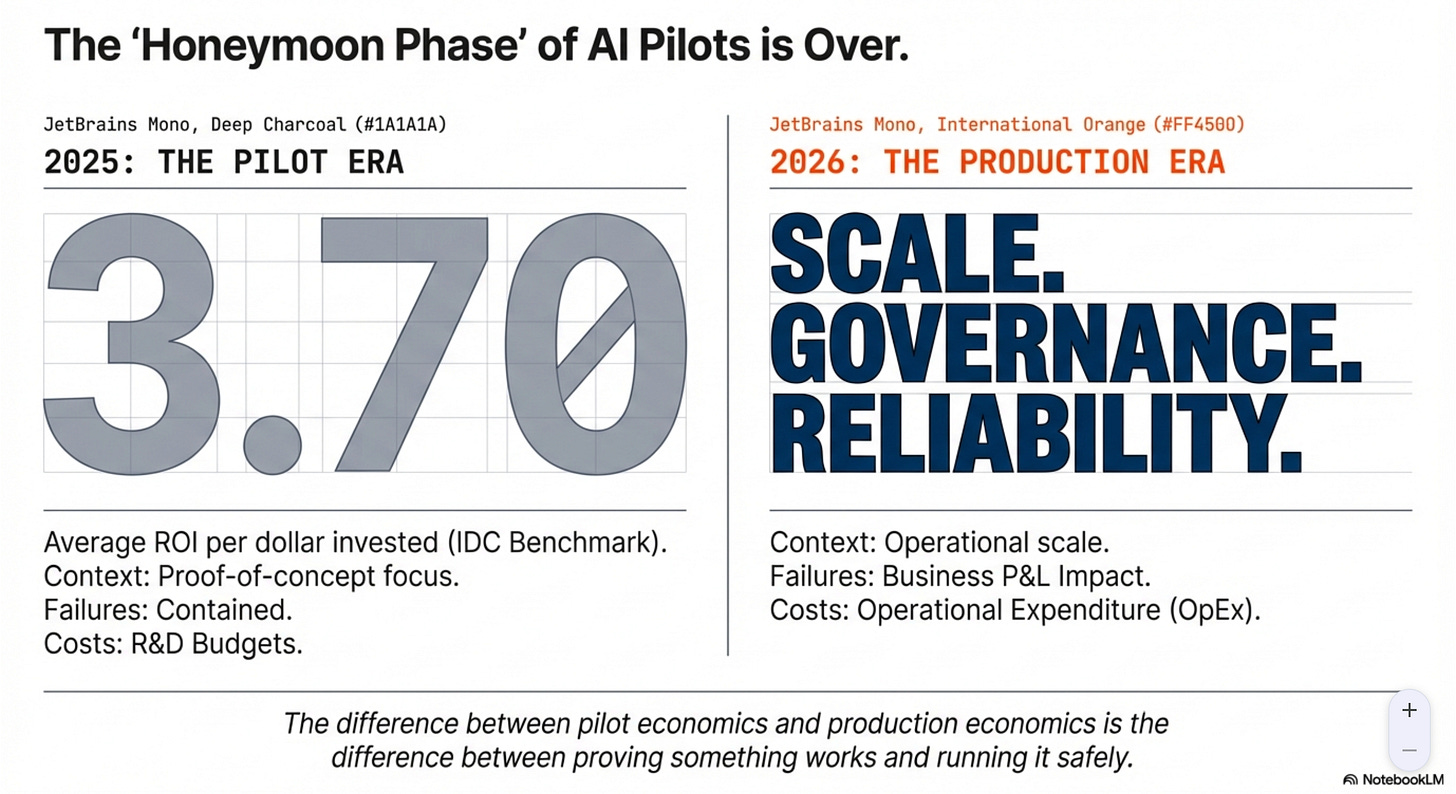

IDC’s early 2025 research reported strong returns: an average ROI of $3.70 for every dollar invested in AI, with top performers reaching $10 for every dollar invested. These numbers appear in every business case I review.

But agentic AI introduces entirely new cost categories and risk categories that must be included in ROI calculations. The agents that enterprises are deploying in 2026 bear little resemblance to the predictive models and chatbots that dominated 2023 and 2024.

And accurately modeling those costs requires input from the architects and engineers who understand what actually runs in production.

1. Why Traditional AI ROI Frameworks Don’t Work for Agents

Traditional AI ROI was incredibly simple. You had a model that automated some human task. You calculated the cost savings from that automation. You subtracted the cost of running the model. Done. Teams could actually budget for it. Everyone moved forward.

Agentic AI demolished that simplicity because agents don’t just predict outcomes or generate content. They execute workflows. They call APIs (tools). They make decisions that cascade through enterprise systems. They interact with customers directly. They fail in ways that impact business operations. They require human oversight structures that didn’t exist before.

This means your cost stack is no longer just “model inference plus engineering time.” It’s an entirely different animal that includes:

Orchestration overhead that coordinates multi-step workflows

Tool execution costs that can exceed inference spend

Evaluation pipelines running continuously in production

Human-in-the-loop workflows for escalation and review

Monitoring infrastructure with distributed tracing

Incident response protocols for agent failures

Compliance controls and audit capabilities

Expected business impact of failures

Most organizations are still using ROI frameworks that capture maybe 40% of the actual costs and maybe 60% of the actual benefits.

When you’re pitching a $500K agent deployment to your CTO, being off by that margin isn’t just imprecise. It’s credibility-destroying.

2. What Actually Matters in 2026

The ROI conversation has fundamentally shifted from “how much does this save us?” to “what does it actually cost us to run this safely at scale, and what business outcomes does it deliver?”

That shift is critical because it reframes the entire discussion. You’re no longer justifying an automation project. You’re justifying a production system that needs reliability, governance, oversight, and continuous evaluation as standard operating capabilities.

Here’s what a modern ROI framework needs to account for:

Business outcomes delivered (not just tasks automated)

Complete operating cost (not just inference)

Reliability at production scale (not POC success rates)

Human oversight requirements (not theoretical autonomous operation)

Continuous evaluation as core capability (not one-time testing)

Miss any of these dimensions and your business case falls apart somewhere between pilot and production. I’ve seen it happen more times than I can count. The agent works great in testing. Budget is approved on optimistic projections. Six months into production, you’re burning 3x the budgeted operational costs and the business is questioning whether the whole thing was worth it.

What “business outcomes” actually means in practice:

When I talk about measuring business outcomes instead of outputs, here’s what enterprises are actually tracking across their agent deployments:

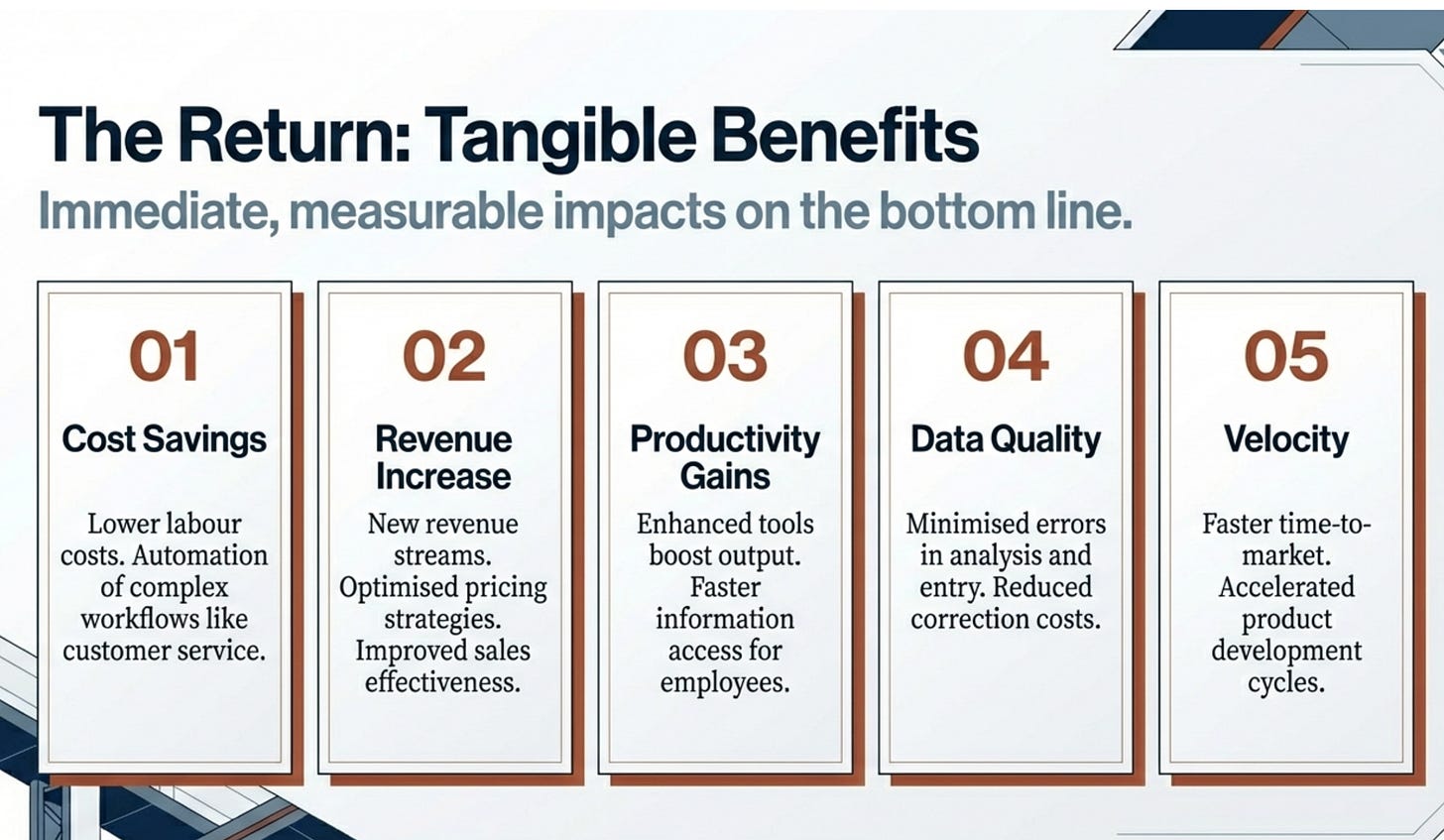

Tangible benefits you can put in your P&L:

Cost savings from workflow automation across customer service, IT operations, finance, and internal processes

Revenue increases from improved sales execution, personalization, and proactive retention

Productivity gains as agents handle coordination and execution, freeing employees for higher-value work

Data quality improvements through reduced manual errors in document processing, reporting, and operational workflows

Improved customer satisfaction from faster resolution, proactive support, and 24/7 availability

Faster time to market through automation of onboarding, approvals, and operational execution

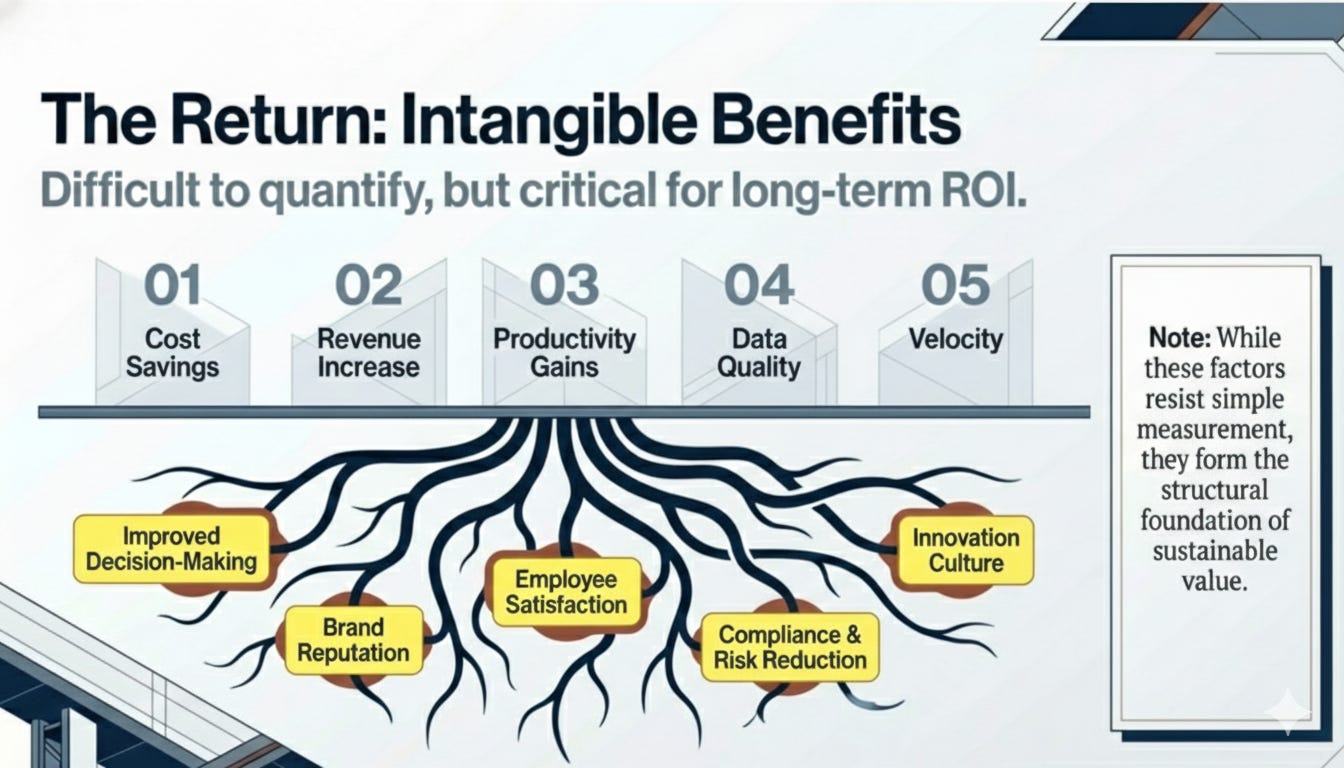

Intangible benefits that compound over time:

Improved decision making as agents synthesize enterprise data and provide actionable recommendations

Enhanced brand reputation when reliable AI assistants strengthen customer trust

Increased employee satisfaction as repetitive work decreases, improving morale and retention

Improved compliance posture when agents support regulatory adherence paired with governance controls

Increased innovation capacity as operational burden decreases

The challenge most teams face is quantifying the intangibles. You can’t put “enhanced brand reputation” directly into an ROI calculation, but you can measure the customer retention improvements and NPS score changes that result from it. You can’t easily value “increased innovation,” but you can track the number of new product features shipped or the reduction in time-to-market for new capabilities.

3. The New Cost Stack Nobody Talks About

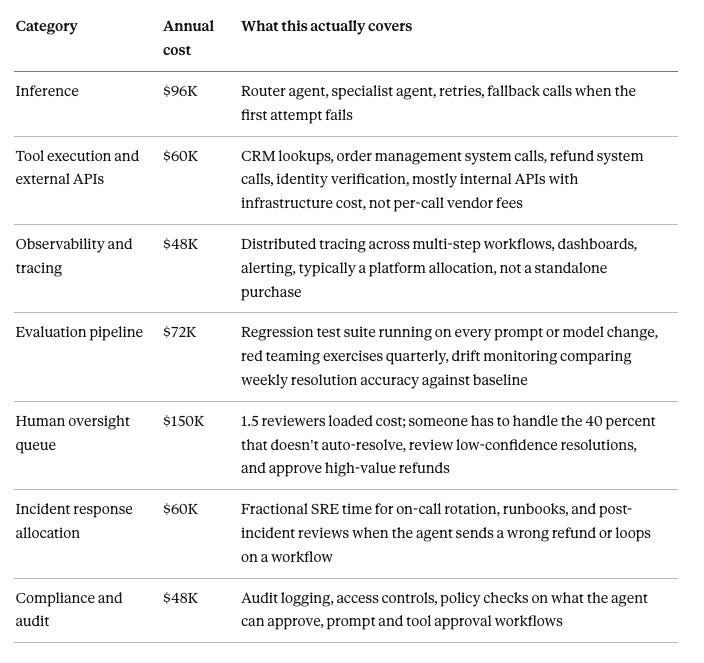

Let me break down what enterprises are actually spending to run agents in production, because this is where most ROI models go sideways.

Your build costs are the visible part of the iceberg. Engineering salaries, orchestration design (coordinating how agents, tools, and workflows interact), cloud development environments, dataset licensing, retrieval indexing, integration with enterprise systems. Most teams budget for this reasonably well because it’s similar to traditional software development.

Then you hit run costs, and this is where things get interesting. Yes, you have model inference costs, but you also have tool execution costs that can exceed your inference spend depending on your architecture. Every API call, every database query, every external system integration has a cost. Retries and error handling aren’t free. If your agent needs to call three different APIs to complete a workflow and one of them is rate-limited, you’re paying for all those retry attempts.
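Here is a rough sketch of how retries inflate tool spend. It assumes every attempt is billed, failures are independent, and the agent stops after `max_attempts` tries. The function names, prices, and failure rates are hypothetical, not measurements from a real system.

```python
def expected_attempts(failure_rate: float, max_attempts: int) -> float:
    """Expected number of billed attempts for one tool call.

    Attempt k+1 only happens if the first k attempts all failed,
    so the expectation is the sum of failure_rate**k for k < max_attempts.
    """
    if not 0.0 <= failure_rate <= 1.0:
        raise ValueError("failure_rate must be between 0 and 1")
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    return sum(failure_rate ** k for k in range(max_attempts))


def workflow_tool_cost(calls) -> float:
    """Expected tool spend for one workflow.

    `calls` is a list of (cost_per_attempt, failure_rate, max_attempts).
    """
    return sum(cost * expected_attempts(p, n) for cost, p, n in calls)


# Hypothetical workflow: three APIs, one of them rate-limited.
calls = [
    (0.002, 0.01, 3),
    (0.004, 0.01, 3),
    (0.010, 0.30, 5),  # rate-limited endpoint, fails 30% of the time
]
print(f"${workflow_tool_cost(calls):.4f} expected tool cost per workflow")
```

Multiply the per-workflow figure by monthly volume and compare it to your inference bill; that comparison is usually more informative than either number alone.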

What almost nobody budgets adequately for is oversight costs. Human escalation workflows don’t build themselves. Review and approval processes require infrastructure. Exception handling needs systems, monitoring, and people watching dashboards. If your agent is handling customer-facing workflows, someone needs to be available when it escalates. That’s headcount, not just compute.

Then there are evaluation costs, which most teams treat as a pre-deployment phase instead of an ongoing operational requirement. Regression testing pipelines, red teaming exercises, safety benchmarking, operational dashboards, distributed tracing infrastructure - all of this runs continuously in production, not just during development.

Finally, you have risk costs that are incredibly difficult to quantify but absolutely need to be in your model. What’s the expected business impact if your agent hallucinates and sends incorrect information to a customer? What’s the compliance exposure if it accesses data it shouldn’t? What’s the reputational cost if it fails publicly?
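One common way to get a first number is an expected-value estimate: how often you expect each kind of incident per year, multiplied by what one incident costs. The sketch below assumes that approach. Every rate and dollar figure in it is a hypothetical placeholder you would replace with your own estimates.

```python
def expected_annual_risk_cost(risks) -> float:
    """Sum of (expected incidents per year * cost per incident)."""
    return sum(rate * impact for _, rate, impact in risks)


# Hypothetical entries: (name, expected incidents per year, cost per incident in USD).
risks = [
    ("Incorrect information sent to a customer", 4.0, 5_000.0),
    ("Agent accesses data it should not", 0.2, 100_000.0),
    ("Public failure", 0.1, 150_000.0),
]
print(f"${expected_annual_risk_cost(risks):,.0f} expected risk cost per year")
```

The estimate will be rough, but a rough line item is easier to challenge and refine than a missing one.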

That IT helpdesk agent that generates $288K in labor savings? It costs $150K annually to run it safely. Your CFO needs both numbers, and they need to understand why the operational overhead is 52% of the gross benefit.
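A small sketch of how the recurring categories roll up. The $288K benefit and $150K total come from the example above; the split across categories is a placeholder I made up so the totals match, and one-time build costs are left out because they are not annual.

```python
from dataclasses import dataclass


@dataclass
class AnnualOperatingCost:
    """Recurring cost categories from this section, in USD per year."""
    run: float         # inference, tool execution, retries
    oversight: float   # escalation handling, review, on-call staff
    evaluation: float  # regression tests, red teaming, tracing
    risk: float        # expected cost of failures

    def total(self) -> float:
        return self.run + self.oversight + self.evaluation + self.risk


# Totals are from the text; the per-category split is a placeholder.
helpdesk = AnnualOperatingCost(
    run=60_000, oversight=50_000, evaluation=25_000, risk=15_000
)
gross_benefit = 288_000

overhead_share = helpdesk.total() / gross_benefit
print(f"Operating cost: ${helpdesk.total():,.0f}")
print(f"Share of gross benefit: {overhead_share:.0%}")
```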

4. A Representative Scenario That Shows the Full Picture

Call Center Ticket Resolution Agent

Scenario. You deploy an agent that resolves “where is my order” and “refund status” tickets end to end.

What the POC ROI model says

Labor saved:

40,000 tickets per month

6 minutes average handle time

60 percent auto-resolved

$35 per hour blended agent cost

Monthly labor savings: 40,000 × 0.60 × 6 minutes = 144,000 minutes = 2,400 hours × $35 = $84,000 per month. $1.01M per year.

Inference cost estimate: $8,000 per month.

POC story: “We spend $8K to save $84K. The ROI is 10x.”
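The POC arithmetic above, written out in Python so you can swap in your own inputs. The variable names are mine; the values are the ones listed in the scenario.

```python
tickets_per_month = 40_000
auto_resolved_share = 0.60
handle_time_minutes = 6
blended_hourly_cost = 35.0
inference_cost_per_month = 8_000.0

# Minutes of handling avoided, converted to hours.
hours_saved = tickets_per_month * auto_resolved_share * handle_time_minutes / 60
monthly_savings = hours_saved * blended_hourly_cost
annual_savings = monthly_savings * 12

# The "10x" in the POC story is savings divided by inference cost only.
poc_multiple = monthly_savings / inference_cost_per_month

print(f"Hours saved per month: {hours_saved:,.0f}")
print(f"Monthly savings: ${monthly_savings:,.0f}")
print(f"Annual savings: ${annual_savings:,.0f}")
print(f"Savings per inference dollar: {poc_multiple:.1f}")
```

Notice that the only cost in this model is inference, which is exactly the gap the production view fills in.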

Benefits: $1.01M per year

What production actually costs

Costs: $534K per year

Net return: $1.01M − $534K = $476K per year.

Still a strong investment. But the story changed from “10x ROI” to “about $1.90 back per dollar spent (roughly 89% ROI), with a $534K operational commitment.” That’s a fundamentally different budget conversation.
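For anyone who wants to check the production numbers, here is the same calculation as a short Python sketch. It uses the rounded annual figures from this scenario and reports both the net return and the benefit per dollar of cost.

```python
annual_benefit = 1_010_000  # rounded figure from the scenario
annual_cost = 534_000       # full production cost from the scenario

net_return = annual_benefit - annual_cost
roi_percent = net_return / annual_cost * 100
benefit_per_dollar = annual_benefit / annual_cost

print(f"Net return: ${net_return:,.0f}")
print(f"ROI: {roi_percent:.0f}%")
print(f"Benefit per dollar of cost: {benefit_per_dollar:.2f}")
```

Presenting both numbers side by side avoids the common confusion between "return multiple" and "ROI percentage" in budget discussions.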

Note: These numbers are representative. Your costs will vary based on agent complexity, ticket volume, and existing infrastructure. Use the categories, not the specific dollar amounts, as your starting framework.

What breaks if you skip the oversight

In Q3 your model provider pushes an update. Your agent starts misclassifying return-window disputes as standard refund requests and auto-approving them. Without regression testing catching the drift and without a human review queue flagging the spike, you process $200K in incorrect refunds over three weeks before a finance analyst notices the anomaly in monthly reconciliation.
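A drift check for a failure like this does not need to be sophisticated. The sketch below is one hypothetical version: compare the observed auto-approval rate against a baseline and alert when it leaves a tolerance band. The function name, the baseline, the tolerance, and the daily counts are all assumptions for illustration.

```python
def approval_rate_drifted(baseline_rate, approved, total, tolerance=0.05):
    """Return True when the observed auto-approval rate leaves the tolerance band."""
    if total <= 0:
        return False  # nothing observed yet, nothing to flag
    observed = approved / total
    return abs(observed - baseline_rate) > tolerance


# Hypothetical daily counts against a 40% baseline auto-approval rate.
print(approval_rate_drifted(0.40, approved=41, total=100))  # within the band
print(approval_rate_drifted(0.40, approved=63, total=100))  # outside the band
```

A check like this running daily would surface the spike in days rather than at monthly reconciliation, which is the cost argument for keeping evaluation in production.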

5. The Framework: How to Actually Calculate This

Stop pitching ROI as a single number on a slide. Start treating it as a production discipline that evolves over the lifecycle of your agent deployment.

Step 1: Define outcomes, not outputs

Don’t measure “tasks automated” or “API calls made” or “conversations handled.” Measure cycle time reduction, cost per transaction, revenue lift, customer satisfaction improvement, employee productivity gains. Your CTO doesn’t care that your agent completed 50,000 workflows. They care that you reduced average resolution time by 35% and improved CSAT scores by 12 points.

Step 2: Establish real baselines before deployment

You cannot calculate ROI without knowing where you started. Measure actual KPI performance for at least one full business cycle before deploying agents. Not estimates based on what you think is happening. Not benchmarks from industry reports. Actual operational data from your systems.

Step 3: Run controlled rollouts that generate comparable data

Your options:

Shadow mode where the agent runs in parallel with humans

A/B testing where some workflows go through the agent and others don’t

Phased deployment where you can compare performance across different user groups

No big bang launches where you can’t isolate the agent’s impact from everything else changing in your operations.

Step 4: Account for reliability in your projections

Track task completion rates, escalation frequency, rework requirements, and expected incident costs. If your agent completes 85% of workflows successfully, escalates 10%, and fails on 5%, all three outcomes have different cost profiles. Your ROI model needs to reflect that distribution, not assume 100% success.
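Here is a minimal sketch of what reflecting that distribution looks like. The 85/10/5 split is the example from this step; the per-outcome costs are hypothetical placeholders, chosen only to show how far the blended cost can sit from the happy-path cost.

```python
OUTCOME_SHARES = {"completed": 0.85, "escalated": 0.10, "failed": 0.05}


def expected_cost_per_workflow(shares, cost_by_outcome) -> float:
    """Blend per-outcome costs by how often each outcome occurs."""
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("outcome shares must sum to 1")
    return sum(shares[name] * cost_by_outcome[name] for name in shares)


# Hypothetical USD costs: compute only, compute plus human review,
# compute plus rework and incident handling.
costs = {"completed": 0.40, "escalated": 4.00, "failed": 9.00}

blended = expected_cost_per_workflow(OUTCOME_SHARES, costs)
print(f"Happy-path cost: ${costs['completed']:.2f}")
print(f"Blended cost:    ${blended:.2f}")
```

With these placeholder costs, the 15% of workflows that escalate or fail account for most of the blended cost, which is why assuming 100% success distorts the model so badly.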

Step 5: Measure over 12-18 months, not at launch

Agent value compounds through iteration. Your Q1 performance after deployment is not representative of steady-state performance. The agent gets better as you tune it. Your team gets better at operating it. Your organization gets better at designing workflows around it. Measuring ROI at month 3 misses 60% of the value creation.
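To see why the measurement window matters, here is a small payback sketch. The upfront cost and the monthly ramp are hypothetical; the shape (slow first quarter, then steady state) is the assumption being illustrated.

```python
from itertools import accumulate


def payback_month(upfront_cost, monthly_net_benefits):
    """Return the 1-based month when cumulative net benefit covers the
    upfront cost, or None if it never does within the series."""
    running_totals = accumulate(monthly_net_benefits)
    for month, cumulative in enumerate(running_totals, start=1):
        if cumulative >= upfront_cost:
            return month
    return None


# Hypothetical ramp: a slow first quarter, then steady state for 15 months.
monthly = [5_000, 10_000, 15_000] + [30_000] * 15

print("Cumulative at month 3:", sum(monthly[:3]))
print("Payback month:", payback_month(200_000, monthly))
```

In this made-up ramp, a month-3 review sees only a small fraction of the upfront cost recovered, even though the deployment pays back within the first year.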

Step 6: Build continuous evaluation into operations

Evaluation isn’t a pre-deployment phase that ends when you go live. It’s an ongoing operating capability that runs in production, catches regressions, validates new capabilities, and ensures your agent maintains performance as your business changes. Budget for it. Staff for it. Measure it.

6. What IDC Got Right in 2025 (and What 2026 Requires)

IDC’s early 2025 research showed an average ROI of $3.70 for every dollar invested in AI, with top performers reaching $10 for every dollar. These numbers got cited in every business case last year, and they were directionally useful as anchors for traditional AI investments.

But 2025 was the year of agentic AI pilots. 2026 is the year of production deployments at scale. And that shift exposes what those aggregate numbers missed.

What those benchmarks didn’t account for:

Orchestration overhead in production agent systems. When you’re coordinating multiple agents, managing complex tool chains, handling state across async workflows, and building human-in-the-loop processes that didn’t exist before, you lose 10-20% efficiency to coordination complexity.

The cost of continuous evaluation as an operating capability, not a pre-deployment phase. Early 2025 ROI models treated testing as a one-time expense. Production agents need ongoing regression testing, safety benchmarking, and performance monitoring.

Human oversight infrastructure that scales with agent adoption. The first agent deployment might need one person monitoring escalations. The tenth deployment needs a team, workflows, and governance processes.

Risk costs from agent failures in customer-facing workflows. When agents were in pilot mode, failures were contained. When they’re handling 40% of your support volume, failures have real business impact that must be modeled.

What this means for 2026 ROI frameworks:

Your ROI should be modeled as a range, not a point estimate. Budget conservatively assuming you’ll hit the low end of that range. Celebrate when you exceed it. Don’t promise your CFO 400% returns based on top-performer benchmarks from 2025 when you’re deploying your first production agent in 2026.
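A minimal way to produce that range is to pair the low benefit estimate with the high cost estimate, and the high benefit estimate with the low cost estimate. The sketch below assumes that approach; the function names and the dollar bounds are hypothetical.

```python
def roi_percent(benefit: float, cost: float) -> float:
    """ROI as a percentage: (benefit - cost) / cost * 100."""
    return (benefit - cost) / cost * 100


def roi_range(benefit_low, benefit_high, cost_low, cost_high):
    """Return (pessimistic, optimistic) ROI in percent."""
    pessimistic = roi_percent(benefit_low, cost_high)
    optimistic = roi_percent(benefit_high, cost_low)
    return pessimistic, optimistic


# Hypothetical annual bounds in USD.
low, high = roi_range(
    benefit_low=800_000, benefit_high=1_100_000,
    cost_low=450_000, cost_high=600_000,
)
print(f"ROI range: {low:.0f}% to {high:.0f}%")
```

Budget against the first number and treat the second as the stretch target.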

The difference between pilot economics and production economics is the difference between proving something works and running it safely at scale. IDC’s numbers capture the former. Your 2026 business case needs to account for the latter.

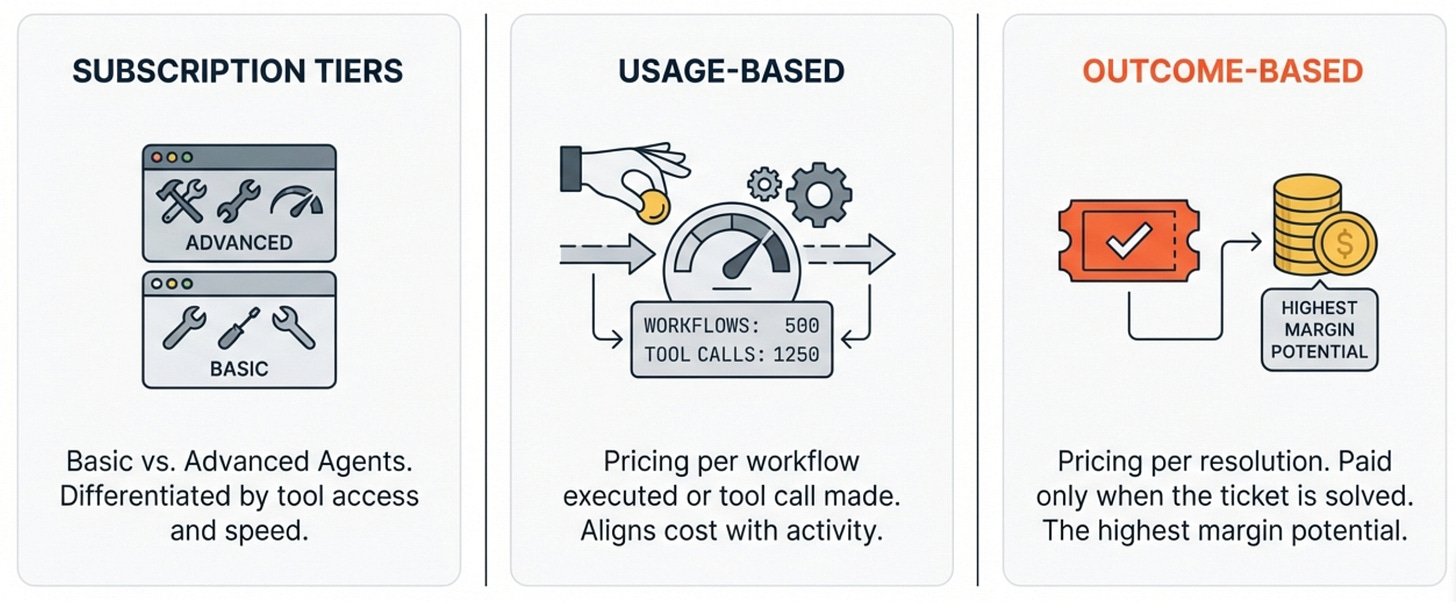

7. The Revenue Side: New Business Models

Most ROI discussions focus entirely on cost reduction, but agentic AI is also creating entirely new revenue models that fundamentally change the economics of software.

Traditional SaaS pricing is based on per-seat licenses. You pay for access regardless of usage. Agentic AI is killing that model because agents don’t have seats. They have outcomes.

I’m seeing three pricing models emerge that change how you calculate revenue from agent deployments:

1. Subscription tiers where customers pay recurring fees for access to agentic capabilities at different performance levels. Basic tier gets you standard agents with limited tool access. Premium tier gets you advanced agents with broader capabilities and faster response times.

2. Usage-based pricing where you charge per workflow executed, per tool call made, per transaction completed. This aligns cost directly with value delivered and scales naturally with customer adoption.

3. Outcome-based pricing where you charge for results, not access. Your agent resolves a support ticket? You get paid. It doesn’t resolve the ticket? You don’t get paid. This is terrifying for traditional software companies and incredibly attractive for customers.
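The three models differ in what drives revenue, which a few lines of Python make explicit. This is a simplified sketch: the function names, prices, and volumes are hypothetical, and real contracts usually mix these models.

```python
def subscription_revenue(customers, monthly_fee):
    """Revenue depends on customer count, not on usage."""
    return customers * monthly_fee


def usage_revenue(workflows_executed, price_per_workflow):
    """Revenue scales with every workflow run, successful or not."""
    return workflows_executed * price_per_workflow


def outcome_revenue(tickets_attempted, resolution_rate, price_per_resolution):
    """Only resolved tickets are billable."""
    return tickets_attempted * resolution_rate * price_per_resolution


# Hypothetical month for one product sold three different ways.
print(subscription_revenue(customers=50, monthly_fee=2_000))
print(usage_revenue(workflows_executed=40_000, price_per_workflow=0.25))
print(outcome_revenue(tickets_attempted=40_000, resolution_rate=0.60,
                      price_per_resolution=0.50))
```

Under outcome-based pricing, `resolution_rate` sits directly in the revenue line, so reliability stops being only a cost concern and becomes a revenue driver.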

This shift matters for ROI because if you’re building agents for external customers, you’re not just saving internal costs. You’re creating entirely new revenue streams with different margin profiles and different scaling characteristics than your current business.

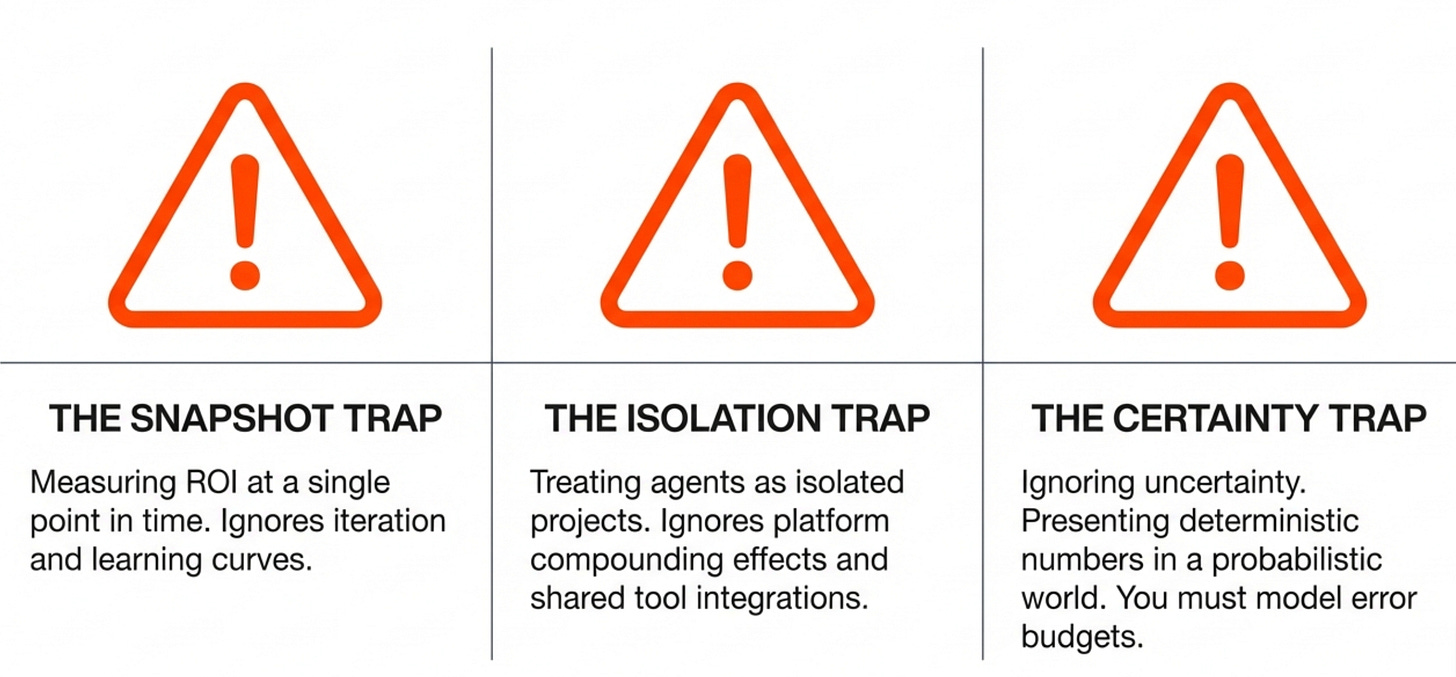

8. Three Pitfalls That Kill Agent ROI

Pitfall 1: Measuring ROI at a single point in time

Agents improve through iteration. Your month-one performance is not your month-twelve performance. If you measure ROI at launch and decide the project failed, you’re abandoning it right before it would have delivered value. Conversely, if you measure at launch and declare success, you might be missing degradation that happens as your workload characteristics change.

Pitfall 2: Treating agents as isolated projects

Agent deployments create platform effects. Your first successful agent proves out your orchestration framework, evaluation infrastructure, monitoring capabilities, and governance processes. Your second agent leverages all of that and deploys faster. Your third agent reuses tools and integrations from the first two. If you evaluate ROI project-by-project, you’re missing the compounding value of building an agent platform.

Pitfall 3: Ignoring uncertainty in your projections

LLMs hallucinate. APIs fail. Users break things in unexpected ways. Your ROI model needs error budgets and contingency planning. If you present a business case with deterministic outcomes and precise numbers, you’re either lying or you haven’t run enough production systems.

What This Means for Your Next Business Case

If you’re pitching agentic AI to leadership in 2026, here’s your checklist:

Business outcomes clearly defined and measurable - Not “automate customer service” but “reduce average resolution time from 8 minutes to 5 minutes while maintaining 4.2+ CSAT scores”

Baseline KPIs measured over a full business cycle - Not estimated. Measured.

Full cost stack modeled - Build, run, evaluation, oversight, and risk costs included

Reliability targets set - Expected escalation rates, rework requirements, and incident frequency planned for

Oversight workflows designed and staffed - Not theoretical autonomous operation

Evaluation pipelines built as production infrastructure - Not pre-deployment testing that ends at launch

ROI tracked as a range - Conservative low-end projections and stretch high-end targets, not a single number

Skip any of these and you’re not building a business case. You’re building a reason for your CTO to say no six months into production when the costs don’t match your projections.

9. Final Thoughts

Agentic AI works. I’ve seen it deliver transformational value in dozens of enterprise deployments across financial services, healthcare, telecommunications, and media. The technology is real. The business value is real.

But the ROI frameworks most organizations are using were built for a different kind of AI system. They don’t account for the operational complexity, oversight requirements, evaluation infrastructure, and risk management that production agents require.

In 2026, a credible ROI model for agentic AI needs to measure business outcomes, account for full operating costs, plan for reliability at scale, design for human oversight, and treat continuous evaluation as standard capability. Include your technical practitioners in these ROI calculations. If you haven’t built the system, you won’t know the actual costs of running it.

Get that framework right, and you can justify agent investments that scale. Get it wrong, and you’ll be explaining budget variances while your competitors are deploying their fifth agent.

The choice is yours.

What's the biggest cost category your team has underestimated (or completely missed) in an agentic AI business case?